Empathy, Resilience, Innovation, and Speed: The Blueprint for Intelligent Healthcare Transformation

Forrester’s recent report, Becoming An Intelligent Healthcare Organization Is An Attainable Goal, Not A Lost Cause, confirms what healthcare executives already know: transformation is no longer optional.

Perficient is proud to be quoted in this research, which outlines a pragmatic framework for becoming an intelligent healthcare organization (IHO)—one that scales innovation, strengthens clinical and operational performance, and delivers measurable impact across the enterprise and the populations it serves.

Why Intelligent Healthcare Is No Longer Optional

Healthcare leaders are under pressure to deliver better outcomes, reduce costs, and modernize operations, all while navigating fragmented systems and siloed departments. Forrester’s report identifies four hallmarks of intelligent healthcare organizations, emphasizing that transformation is not a destination but a continuous practice.

Breaking Through Transformation Barriers

The journey to transformation requires more than technology; it demands strategic clarity, operational alignment, and a commitment to continuous improvement. Forrester reports, “Among business and technology professionals at large US healthcare firms, only 63% agree that their IT organization can readily reallocate people and technologies to serve the newest business priority; 65% say they have enterprise architecture that can quickly and efficiently support major changes in business strategy and execution.”

Despite widespread investment in digital tools, many healthcare organizations struggle to translate those investments into enterprise-wide impact. Misaligned priorities, inconsistent progress across departments, and legacy systems often create bottlenecks that stall innovation and dilute momentum.

These challenges aren’t just technical or organizational. They’re strategic. Enterprise leaders can no longer sit on the sidelines and play the “wait and see” game. They must shift from reactive IT management to proactive digital orchestration, where technology, talent, and transformation are aligned to business outcomes.

Business transformation is not a fleeting trend. It’s an essential strategy for healthcare organizations that want to remain competitive as the marketplace evolves.

Four Hallmarks of An Intelligent Healthcare Organization (IHO)

To overcome these barriers, healthcare organizations must align consumer expectations, digital infrastructure, clinical workflows, and data governance with strategic business goals.

1. Empathy At Scale: Human-Centered, Trust-Enhancing Experiences

A defining trait of intelligent healthcare organizations is a commitment to human-centered experiences. This is driven by a continuous understanding of consumer needs and supported by strategic technology investments that enable timely, personalized interventions and touchpoints. As Forrester notes, “The most intelligent organizations excel at empathetic, swift, and resilient innovation to continuously deliver new value for customers and stay ahead of the competition.”

Empathy is more than a design principle. It’s a performance driver. Organizations that prioritize human-centered care see higher engagement, better adherence, and stronger loyalty.

Our experts help clients reimagine care journeys using journey sciences, predictive analytics, integrated CRM and CDP platforms, and cloud-native architectures that support scalable personalization. But personalization without protection is a risk. That’s why empathy must extend beyond experience design to include ethical, secure, and responsible AI adoption.

Healthcare organizations face unique constraints, including HIPAA, PHI, and PII regulations that limit the utility of plug-and-play AI solutions. To meet these challenges, we apply our PACE framework—Policies, Advocacy, Controls, and Enablement—to ensure AI is not only innovative but also rooted in trust.

- Policies establish clear boundaries for acceptable AI usage, tailored to healthcare’s regulatory landscape.

- Advocacy builds cross-functional understanding and adoption through education and collaboration.

- Controls implement oversight, auditing, and risk mitigation to protect patient data and ensure model integrity.

- Enablement equips teams with the tools and environments needed to innovate confidently and securely.

This approach ensures AI is deployed with purpose, aligned to business goals, and embedded with safeguards that protect consumers and care teams alike. It also supports the creation of reusable architectures that blend scalable services with real-time monitoring, which is critical for delivering fast, reliable, and compliant AI applications.

Responsible AI isn’t a checkbox. It’s a continuous practice. And in healthcare, it’s the difference between innovation that inspires trust and innovation that invites scrutiny.

2. Designing for Disruption: Resilience as a Competitive Advantage

Patient-led experiences must be grounded in a clear-eyed understanding that market disruption isn’t simply looming. It’s already here. To thrive, healthcare leaders must architect systems that flex under pressure and evolve with purpose. Resilience is more than operational; it’s also behavioral, cultural, and strategic.

Perficient’s Access to Care research reveals that friction in the care journey directly impacts health outcomes, loyalty, and revenue:

- More than 50% of consumers who experienced scheduling friction took their care elsewhere, resulting in lost revenue, trust, and care continuity

- 33% of respondents acted as caregivers, yet this persona is often overlooked in digital strategies

- Nearly 1 in 4 respondents who experienced difficulty scheduling an appointment stated that the friction led to delayed care, and they believed their health declined as a result

- More than 45% of consumers aged 18–64 have used digital-first care instead of their regular provider, and 92% of them believe the quality is equal or better

This sentiment should be a wake-up call for leaders. It clearly signals that consumers expect healthcare to meet both foundational needs (cost, access) and lifestyle standards (convenience, personalization, digital ease). When systems fail to deliver, patients disengage. And when caregivers, who often manage care for entire households, encounter barriers, the ripple effect is exponential.

To build resilience that drives retention and revenue, leaders must design systems that anticipate needs and remove barriers before they impact care. Resilient operations must therefore be designed to:

- Reduce friction across the care journey, especially in scheduling and follow-up

- Support caregivers with multi-profile tools, shared access, and streamlined coordination

- Enable digital-first engagement that mirrors the ease of consumer platforms like Amazon and Uber

Consumers are blending survival needs with lifestyle demands. Intelligent healthcare organizations address both simultaneously.

Resilience also means preparing for the unexpected. Whether it’s regulatory shifts, staffing shortages, or competitive disruption, IHOs must be able to pivot quickly. That requires leaders to reimagine patient (and member) access as a strategic lever and prioritize digital transformation that eases the path to care.

3. Unified Innovation: Aligning Strategy, Tech, and Teams

Innovation without enterprise alignment is just noise—activity without impact. When digital initiatives are disconnected from business strategy, consumer needs, or operational realities, they create confusion, dilute resources, and fail to deliver meaningful outcomes. Fragmented innovation may look impressive in isolation, but without coordination, it lacks the momentum to drive true transformation.

To deliver real results, healthcare leaders must connect strategy, execution, and change readiness. In Forrester’s report, a quote from an interview with Priyal Patel emphasizes the importance of a shared strategic vision:

“Today’s decisions should be guided by long-term thinking, envisioning your organization’s business needs five to 10 years into the future.” — Priyal Patel, Director, Perficient

Our approach begins with strategic clarity. Using our Envision Framework, we help healthcare organizations rapidly identify opportunities, define a consumer-centric vision, and develop a prioritized roadmap that aligns with business goals and stakeholder expectations. This framework blends real-world insights with pragmatic planning, ensuring that innovation is both visionary and executable.

We also recognize that transformation is not just technical—it’s human. Organizational change management (OCM) ensures that teams are ready, willing, and able to adopt new ways of working. Through structured engagement, training, and sustainment, we help clients navigate the behavioral shifts required to scale innovation across departments and disciplines.

This strategic rigor is especially critical in healthcare, where innovation must be resilient, compliant, and deeply empathetic. As highlighted in our 2025 Digital Healthcare Trends report, successful organizations are those that align innovation with measurable business outcomes, ethical AI adoption, and consumer trust.

Perficient’s strategy and transformation services connect vision to execution, ensuring that innovation is sustainable:

- Digital Business Transformation: End-to-end strategies to modernize capabilities, experiences, and platforms

- Consumer Experience Strategy: Designing journeys that build loyalty and advocacy

- Product Strategy and Innovation: Defining digital products that meet emerging expectations

- Digital Operating Models: Structuring teams and systems to deliver at scale

- Change Management Enablement: Preparing people to embrace and sustain transformation

We partner with healthcare leaders to identify friction points and quick wins, build a culture of continuous improvement, and empower change agents across the enterprise.


4. Speed With Purpose and Strategic Precision

The ability to pivot, scale, and deliver quickly is becoming a defining trait of tomorrow’s healthcare leaders. The way forward requires a comprehensive digital strategy that builds the capabilities, agility, and alignment to stay ahead of evolving demands and deliver meaningful impact.

IHOs act quickly without sacrificing quality. But speed alone isn’t enough. Perficient’s strategic position emphasizes speed with purpose—where every acceleration is grounded in business value, ethical AI adoption, and measurable health outcomes.

Our experts help healthcare organizations move fast by:

- Designing for agility with composable architectures, MVP+ delivery models, and intelligent automation that compliantly accelerates time-to-value while maintaining clinical integrity

- Aligning digital transformation with value-based care goals, enabling organizations to shift from volume to value through strategic investments that integrate cloud platforms, interoperable systems, and advanced analytics

- Operationalizing AI responsibly to support equitable, secure, compliant, and scalable AI adoption

This approach supports the Quintuple Aim: better outcomes, lower costs, improved experiences, clinician well-being, and health equity. It also ensures that innovation is not just fast. It’s focused, ethical, and sustainable.

Speed with purpose means:

- Rapid prototyping that validates ideas before scaling

- Real-time data visibility to inform decisions and interventions

- Cross-functional collaboration that breaks down silos and accelerates execution

- Outcome-driven KPIs that measure impact, not just activity

Healthcare leaders don’t need more tools. They need a strategy that connects business imperatives, consumer demands, and an empowered workforce to drive transformation forward. Perficient equips organizations to move with confidence, clarity, and control.

Collaborating to Build Intelligent Healthcare Organizations

We believe our inclusion in Forrester’s report underscores our role as a trusted advisor in intelligent healthcare transformation. From insight to impact, our healthcare expertise equips leaders to modernize, personalize, and scale care. We drive resilient, AI-powered transformation to shape the experiences and engagement of healthcare consumers, streamline operations, and improve the cost, quality, and equity of care.

We have been trusted by the 10 largest health systems and the 10 largest health insurers in the U.S., and Modern Healthcare consistently ranks us as one of the largest healthcare consulting firms.

Our strategic partnerships with industry-leading technology innovators—including AWS, Microsoft, Salesforce, Adobe, and more—accelerate healthcare organizations’ ability to modernize infrastructure, integrate data, and deliver intelligent experiences. Together, we shatter boundaries so you have the AI-native solutions you need to boldly advance business.

Ready to advance your journey as an intelligent healthcare organization?

We’re here to help you move beyond disconnected systems and toward a unified, data-driven future—one that delivers better experiences for patients, caregivers, and communities. Let’s connect and explore how you can lead with empathy, intelligence, and impact.

Migrating a website or upgrading to a new Sitecore platform is more than a technical lift. It’s a business transformation and an opportunity to align your site and platform with your business goals and take full advantage of Sitecore’s capabilities. A good migration protects functionality, reduces risk, and creates an opportunity to improve user experience, operational efficiency, and measurable business outcomes.

Before jumping to the newest version or the most hyped architecture, pause and assess. Start with a thorough discovery: review the current architecture, understand what kind of migration is required, decide what can realistically be reused versus what should be refactored or rebuilt, and identify a suitable topology and set of Sitecore products.

This blog expands on the key considerations to weigh before committing to a Sitecore migration, translating them into detailed, actionable architecture decisions and migration patterns that guide an impactful implementation.

1) Clarifying client requirements

Before starting any Sitecore migration or implementation, it’s crucial to clarify the client’s requirements thoroughly. This ensures the solution aligns with actual business needs, not just technical requests, and helps avoid rework or misaligned outcomes.

Scope goes beyond just features: Don’t settle for “migrate this” as the requirement. Ask deeper questions to shape the right migration strategy:

- Business goals: Is the aim a redesign, conversion uplift, version upgrade, multi-region rollout, or compliance?

- Functional scope: Are we redesigning the entire site or specific flows like checkout/login, or making back office changes?

- Non-functional needs: What are the performance SLAs, uptime expectations, compliance requirements (e.g., PCI, GDPR), and accessibility standards?

- Timeline: Is a phased rollout preferred, or a big-bang launch?

Requirements can vary widely, from full redesigns using Sitecore MVC or headless (JSS/Next.js), to performance tuning (caching, CDN, media optimization), security enhancements (role-based access, secure publishing), or integrating new business flows into Sitecore workflows.

Sometimes the client may not fully know what’s needed; it’s up to us to assess the current setup and recommend improvements. Don’t assume the ask equals the need: a full rewrite isn’t always the best path. A focused pilot or proof of value can deliver better outcomes and helps validate the direction before scaling.

2) Architecture of the client’s system

Migration complexity varies significantly based on what the client is currently using. Evaluate the current system, how it is used, and which parts can be reused.

Key Considerations

- If the client is already on Sitecore, the version matters. Older versions may require reworking the content model, templates, and custom code to align with modern Sitecore architecture (e.g., SXA, JSS).

- If the client is not on Sitecore, evaluate their current system, infrastructure, and architecture. Identify what can be reused, such as existing servers (for on-prem), services, or integrations, to reduce effort.

- Legacy systems often include deprecated APIs, outdated connectors, or unsupported modules, which increase technical risk and require reengineering.

- Historical content, such as outdated media, excessive versioning, or unused templates, can bloat the migration. It’s important to assess what should be migrated, cleaned, or archived.

- Map out all customizations, third-party integrations, and deprecated modules to estimate the true scope, effort, and risk involved.

- Understanding the current system’s age, architecture, and dependencies is essential for planning a realistic and efficient migration path.
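As one illustration of the historical-content point, the Python sketch below applies a single cleanup rule: keep the newest N versions of each item per language and mark the rest for archiving before migration. It is a minimal sketch under stated assumptions. The `ItemVersion` record shape and the `keep` default are hypothetical, not a Sitecore API, and the input would come from whatever content export your team already uses.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemVersion:
    # Hypothetical shape of one exported content item version.
    item_id: str
    language: str
    version: int


def versions_to_archive(versions: list[ItemVersion], keep: int = 3) -> list[ItemVersion]:
    """Return every version outside the newest `keep` per (item, language)."""
    if keep < 1:
        raise ValueError("keep must be at least 1")

    grouped: dict[tuple[str, str], list[ItemVersion]] = defaultdict(list)
    for v in versions:
        grouped[(v.item_id, v.language)].append(v)

    archive: list[ItemVersion] = []
    for group in grouped.values():
        # Newest first; anything after the first `keep` entries is archived.
        group.sort(key=lambda v: v.version, reverse=True)
        archive.extend(group[keep:])
    return archive


if __name__ == "__main__":
    history = [ItemVersion("home", "en", n) for n in range(1, 6)]
    history.append(ItemVersion("home", "de", 1))
    to_archive = versions_to_archive(history, keep=2)
    print(sorted(v.version for v in to_archive))  # older "en" versions only
```

The retention count is a business decision. Agree on it with content owners, and check any audit or compliance requirements, before pruning anything.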

3) Media Strategy

When planning a Sitecore migration or upgrade, poorly planned media handling can cause major performance issues after launch. Media is critical for user experience, scalability, and operational efficiency, so it needs attention early in the planning phase. Digital Asset Management (DAM) determines how assets are stored, delivered, and governed.

Key Considerations

- Inventory: Assess media size, formats, CDN references, metadata, and duplicates. Identify unused assets, and plan to adopt modern formats (e.g., WebP).

- Storage Decisions: Decide whether assets stay in the Sitecore Media Library, move to Content Hub, or move to other cloud storage (e.g., Azure Blob Storage, Amazon S3).

- Reference Updates: Plan for content reference updates to avoid broken links.
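A minimal Python sketch of the inventory step follows. It assumes the media has already been exported as path-to-bytes pairs, for example from a Media Library export or a blob container listing. It groups byte-identical duplicates by SHA-256 hash and flags legacy raster formats as candidates for WebP conversion. The function names and the extension list are illustrative assumptions.

```python
import hashlib
from collections import defaultdict
from pathlib import PurePosixPath

# Extensions treated as candidates for conversion to a modern format such as WebP.
# This set is an assumption; adjust it to your own media policy.
LEGACY_IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif", ".bmp"}


def find_duplicates(assets: dict[str, bytes]) -> list[list[str]]:
    """Group asset paths whose binary content is identical (SHA-256 match)."""
    by_hash: dict[str, list[str]] = defaultdict(list)
    for path, blob in assets.items():
        by_hash[hashlib.sha256(blob).hexdigest()].append(path)
    return [sorted(paths) for paths in by_hash.values() if len(paths) > 1]


def webp_candidates(paths: list[str]) -> list[str]:
    """Return image paths whose extension suggests a legacy raster format."""
    return sorted(
        p for p in paths if PurePosixPath(p).suffix.lower() in LEGACY_IMAGE_EXTENSIONS
    )


if __name__ == "__main__":
    media = {
        "/media/logo.png": b"logo-bytes",
        "/media/old/logo-copy.png": b"logo-bytes",
        "/media/hero.webp": b"hero-bytes",
    }
    print(find_duplicates(media))
    print(webp_candidates(list(media)))
```

Hash matching only catches byte-identical copies. Resized or re-encoded variants of the same image need a perceptual comparison or a manual review.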

4) Analytics, personalization, A/B testing, and forms

These features often carry stateful data and behavioral dependencies that can easily break during migration if not planned for. Ignoring them can lead to data loss and degraded user experience.

Key Considerations

- Analytics: Check whether xDB, Google Analytics, or other trackers are in use. Decide how historical analytics data will be preserved, validated, and integrated into the new environment.

- Personalization: Confirm use of Sitecore rules, xConnect collections, or an external personalization engine. Plan to migrate segments, conditions, and audience definitions accurately.

- A/B Testing & Experiments: If experiments are present, draft a plan to export their definitions and results.

- Forms: Identify which forms collect data and how they integrate with CRM or marketing automation.

These considerations play an important role in choosing a Sitecore topology. If analytics is used extensively, XP is a suitable option. Form submissions and consent flows are also handled differently in different topologies.

5) Search Strategy

Search is critical for user experience, and a migration is the right time to reassess whether your current search approach still makes sense.

Key Considerations

- Understand how users interact with the site. Is search a primary navigation tool or a secondary feature? Does it significantly impact conversion or engagement?

- Identify the current search engine, if any, and assess its features. Are advanced capabilities like AI recommendations, synonyms, or personalization being used effectively?

- If the current engine is underutilized, note that maintaining it may add unnecessary cost and complexity. If search is business-critical, ensure feature parity or enhancement in the new architecture.

- Future Alignment: Based on requirements, determine whether the roadmap supports:


- Sitecore Search (SaaS) for composable and cloud-first strategies.

- Solr for on-prem or PaaS environments.

- Third-party engines for enterprise-wide search needs.

6) Integrations, APIs & Data Flows

Integrations are often the hidden complexity in Sitecore migrations. They connect critical business systems, and any disruption can lead to post-go-live incidents. For small, simple content-based sites with no integrations, migrations tend to be quick and straightforward. For more complex environments, however, it’s essential to analyze all layers of the architecture to understand where and how data flows.

Key Considerations

- Integration Inventory: List all synchronous and asynchronous integrations, including APIs, webhooks, and data pipelines. Some integrations may rely on deprecated endpoints or legacy SDKs that need refactoring.

- Criticality & Dependencies: Identify mission-critical integrations (e.g., CRM, ERP, payment gateways) and the systems that depend on them.

- Batch & Scheduled Jobs: Audit long-running processes, scheduled exports, and batch jobs. Migration may require re-scheduling or re-platforming these jobs.

- Security & Compliance: Validate API authentication, token lifecycles, and data encryption. Moving to SaaS or composable may require new security patterns.
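One lightweight way to keep the inventory actionable is to record each integration with its mode, criticality, and risk flags, then sort the list by migration priority. The Python below is a hypothetical sketch. The `Integration` fields, the risk rules, and the ordering are assumptions chosen to mirror the considerations above; they are not a standard schema.

```python
from dataclasses import dataclass
from enum import IntEnum


class Criticality(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3  # e.g., CRM, ERP, payment gateways


@dataclass(frozen=True)
class Integration:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    mode: str  # "sync", "async", or "batch"
    criticality: Criticality
    uses_deprecated_sdk: bool = False
    auth: str = "unknown"  # e.g., "oauth2", "api-key", "basic"


def risk_notes(item: Integration) -> list[str]:
    """Flag follow-up work implied by the considerations above."""
    notes = []
    if item.uses_deprecated_sdk:
        notes.append("refactor: deprecated endpoint or SDK")
    if item.auth in ("basic", "unknown"):
        notes.append("review: authentication pattern")
    if item.mode == "batch":
        notes.append("re-schedule: batch job")
    return notes


def triage(integrations: list[Integration]) -> list[tuple[Integration, list[str]]]:
    """Order integrations by criticality first, then by number of open risks."""
    annotated = [(item, risk_notes(item)) for item in integrations]
    return sorted(
        annotated,
        key=lambda pair: (-pair[0].criticality, -len(pair[1]), pair[0].name),
    )


if __name__ == "__main__":
    inventory = [
        Integration("Product feed", "batch", Criticality.MEDIUM, uses_deprecated_sdk=True),
        Integration("CRM", "sync", Criticality.HIGH, auth="oauth2"),
        Integration("Payments", "sync", Criticality.HIGH, auth="basic"),
    ]
    for item, notes in triage(inventory):
        print(item.name, notes)
```

The sort key puts business criticality ahead of technical risk. Teams that would rather tackle the riskiest integrations first can swap the first two key elements.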

7) Identify which Sitecore offerings are in use, and to what extent

Before migration, it’s essential to document the current Sitecore ecosystem and evaluate what the future state should look like. This determines whether the path is a straight upgrade or a transition to a composable stack.

Key Considerations

- Current Topology: Is the solution running on XP or XM? Assume that XP features (xDB, personalization) may not be needed if moving to composable.

- Content Hub: Check if DAM or CMP is in use. If not, consider whether DAM is required for centralized asset management, brand consistency, and omnichannel delivery.

- Sitecore Personalize & CDP: Assess if personalization is currently rule-based or if advanced testing and segmentation are required.

- OrderCloud: Determine whether commerce capabilities exist today or are planned for the near future.

Target Topologies

Choosing the target architecture is one of the most critical decisions. This choice impacts infrastructure, licensing, compliance, authoring experience, and long-term scalability. It’s not just a technical decision; it’s a business decision that shapes your future operating model.

Key Considerations

- Business Needs & Compliance: Does your organization require on-prem hosting for regulatory reasons, or can you move to SaaS for agility?

- Authoring Experience: Will content authors need Experience Editor, or is a headless-first approach acceptable?

- Operational Overhead: How much infrastructure management can the team handle post-migration?

- Integration Landscape: Are there tight integrations with legacy systems that require full control over infrastructure?

Architecture Options & Assumptions

| Option | Best For | Pros | Cons | Assumptions |
|---|---|---|---|---|
| XM (on-prem/PaaS) | CMS-only needs, multilingual content, custom integrations | Visual authoring via Experience Editor; hosting control | Limited marketing features | Teams want hosting flexibility and basic CMS capabilities; analytics is not needed |
| Classic XP (on-prem/PaaS) | Advanced personalization, xDB, marketing automation | Full control; deep analytics; advanced marketing; personalization | Complex infrastructure, high resource demand | Marketing features are critical; an infrastructure-heavy setup is acceptable |
| XM Cloud (SaaS) | Agility, fast time-to-market, composable DXP | Reduced overhead; automatic updates; headless-ready | Limited low-level customization | SaaS regions meet compliance needs; easy upgrades are a priority |
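The table can be encoded as a first-pass shortlist to help structure the conversation with stakeholders. This Python sketch is a deliberate simplification under stated assumptions. The `Needs` fields are hypothetical, the logic considers only the three options in the table, and it ignores composable add-ons (such as Sitecore Personalize and CDP alongside XM Cloud) that could change the answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Needs:
    # Hypothetical answers to the key questions above.
    requires_self_hosting: bool  # regulatory or data-residency mandate
    needs_xp_marketing: bool  # xDB, deep analytics, marketing automation
    needs_infra_customization: bool  # low-level control over servers
    saas_regions_meet_compliance: bool


def shortlist_topologies(needs: Needs) -> list[str]:
    """Return candidate options from the comparison table, best fit first."""
    if needs.needs_xp_marketing:
        # In the table, only Classic XP covers xDB and marketing automation.
        return ["Classic XP (on-prem/PaaS)"]

    candidates = []
    saas_ok = needs.saas_regions_meet_compliance and not (
        needs.requires_self_hosting or needs.needs_infra_customization
    )
    if saas_ok:
        candidates.append("XM Cloud (SaaS)")
    # XM covers CMS-only needs with hosting control in either case.
    candidates.append("XM (on-prem/PaaS)")
    return candidates


if __name__ == "__main__":
    print(shortlist_topologies(Needs(
        requires_self_hosting=False,
        needs_xp_marketing=False,
        needs_infra_customization=False,
        saas_regions_meet_compliance=True,
    )))
```

Treat the output as a starting point for discussion. Licensing, authoring expectations, and the integration landscape still need a human review.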

Along with topology, it’s important to consider the hosting and frontend delivery platforms. Let’s look at the available hosting options and their pros and cons:

- On-Prem (XM/XP): You build and operate the servers yourself, configured exactly as you need.

  - Pros: Maximum control, full compliance for regulated industries, and the ability to integrate with legacy systems.

  - Cons: High infrastructure cost, slower innovation, manual upgrades, and difficulty scaling.

  - Best For: Organizations with strict data residency, air-gapped environments, or regulatory mandates.

  - Your future roadmap may require a move to the cloud, so plan for portability.

- PaaS (Azure App Services, Managed Cloud – XM/XP)

  - Pros: Minimal up-front costs, and no need to maintain the underlying machines.

  - Cons: Limited choice of computing options and functionality.

  - Best For: Organizations expecting to scale vertically and horizontally, often and quickly.

- IaaS (Infrastructure as a Service – XM/XP)

  - Similar to on-prem in the control it offers, but hosted on cloud VMs that you can tailor to meet your exact requirements.

- SaaS (XM Cloud)

  - Pros: Zero infrastructure overhead, automatic upgrades, global scalability.

  - Cons: Limited deep customization at the infrastructure level.

  - Best For: Organizations aiming for a composable DXP and agility.

  - Fully managed by Sitecore.

For development, options include .NET MVC, .NET Core, Next.js, and React. Depending on the chosen topology, frontend delivery can be traditional, hybrid, or headless:

- .NET MVC → For a traditional, web-only application.

- Headless (e.g., Next.js) → For a multi-channel, composable, SaaS-first strategy.

- .NET Core Rendering → For hybrid modernization with .NET.

8) Security, Compliance & Data Residency

Security is non-negotiable during any Sitecore migration or upgrade. These factors influence architecture, hosting choices and operational processes.

Key Considerations

- Authentication & Access: Validate SSO, SAML/OAuth configurations, API security, and secrets management. Assume that identity providers or token lifecycles may need reconfiguration in the new environment.

- Compliance Requirements: Confirm obligations such as PCI, HIPAA, GDPR, accessibility, and regional privacy laws. Assume these will affect data storage and encryption and, now that AI tools are part of many delivery teams, the development workflow as well.

- Security Testing: Plan for automated vulnerability scans (decide which scanning tools you will use) and manual penetration testing as part of discovery and pre-go-live validation.
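For the token-lifecycle check under Authentication & Access, it can help to inventory when existing credentials expire relative to the planned cutover. The Python sketch below assumes the credentials are JWTs with a standard `exp` claim (a NumericDate, i.e. seconds since the Unix epoch, per RFC 7519). It decodes the payload without verifying the signature, so it is suitable only for inventory and never for authorization decisions. The function names are hypothetical.

```python
import base64
import json
from datetime import datetime, timezone
from typing import Optional


def jwt_expiry(token: str) -> Optional[datetime]:
    """Read the `exp` claim of a JWT WITHOUT verifying its signature.

    Inventory use only: never trust an unverified token for authorization.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a JWT: expected header.payload.signature")
    payload = parts[1]
    # JWT segments are unpadded base64url; restore padding before decoding.
    padded = payload + "=" * (-len(payload) % 4)
    claims = json.loads(base64.urlsafe_b64decode(padded))
    exp = claims.get("exp")
    return None if exp is None else datetime.fromtimestamp(exp, tz=timezone.utc)


def expires_before(token: str, cutover: datetime) -> bool:
    """True if the token expires before `cutover` (a timezone-aware datetime)."""
    expiry = jwt_expiry(token)
    return expiry is not None and expiry < cutover
```

Tokens that expire before go-live, or that have no `exp` claim at all, are the ones to raise with the identity-provider owners during discovery.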

9) Performance

A migration is the perfect opportunity to identify and fix structural performance bottlenecks, but only if you know your starting point. Without a baseline, it’s impossible to measure improvement or detect regressions.

Key Considerations

- Baseline Metrics: Capture current performance indicators like TTFB (Time to First Byte), LCP (Largest Contentful Paint), CLS (Cumulative Layout Shift), throughput, and error rates. These metrics will guide post-migration validation and SLA commitments.

- Caching & Delivery: Document existing caching strategies, CDN usage, and image delivery methods. Current caching patterns may need reconfiguration in the new architecture.

- Load & Stress Testing: Define peak traffic scenarios and choose load-testing tools, setting targets for concurrent users and requests per second.
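The Python sketch below shows two calculations behind the bullets above, under stated assumptions. `regressions` compares baseline and post-migration samples at the 75th percentile, the percentile at which Core Web Vitals are assessed. It flags lower-is-better metrics (such as TTFB, LCP, CLS, and error rate) that worsened beyond a tolerance; throughput, where higher is better, is deliberately excluded. `required_rps` applies Little's Law for a closed workload, X = N / (R + Z), to turn a concurrent-user target into a request rate, where R is response time and Z is user think time, assuming one request per user interaction. The function names, the 10% tolerance, and the example figures are illustrative, not recommendations.

```python
from statistics import quantiles


def p75(samples: list[float]) -> float:
    """75th percentile, the level at which Core Web Vitals are assessed."""
    # With n=4, quantiles() returns the three quartile cut points; [2] is the 75th.
    return quantiles(samples, n=4, method="inclusive")[2]


def regressions(
    baseline: dict[str, list[float]],
    current: dict[str, list[float]],
    tolerance: float = 0.10,
) -> dict[str, tuple[float, float]]:
    """Return lower-is-better metrics whose p75 worsened by more than `tolerance`.

    Intended for TTFB, LCP, CLS, and error rate; do not pass throughput here,
    because higher throughput is better.
    """
    worse = {}
    for metric, before_samples in baseline.items():
        if metric not in current:
            continue  # metric not captured after migration; investigate separately
        before, after = p75(before_samples), p75(current[metric])
        if after > before * (1 + tolerance):
            worse[metric] = (before, after)
    return worse


def required_rps(concurrent_users: int, response_time_s: float, think_time_s: float) -> float:
    """Little's Law for a closed workload: X = N / (R + Z).

    Assumes one request per user interaction.
    """
    return concurrent_users / (response_time_s + think_time_s)


if __name__ == "__main__":
    before = {"LCP": [2.0, 2.2, 2.4, 2.6], "TTFB": [0.4, 0.5, 0.5, 0.6]}
    after = {"LCP": [2.9, 3.1, 3.3, 3.5], "TTFB": [0.4, 0.5, 0.5, 0.6]}
    print(regressions(before, after))
    print(required_rps(2000, response_time_s=0.5, think_time_s=9.5))
```

For example, 2,000 concurrent users with a 0.5 s response time and 9.5 s of think time correspond to roughly 200 requests per second. Pages that issue several requests per interaction need that figure multiplied accordingly.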

10) Migration Strategies

Choosing the right migration strategy is critical to balance risk, cost, and business continuity. There’s no one-size-fits-all approach; the right choice depends on timeline, technical debt, and operational constraints.

Common Approaches

- Lift & Shift

Move the existing solution as is, with minimal changes. This suits low-risk migrations where speed is the priority, and works when the current solution is stable and technical debt is manageable. However, existing issues and inefficiencies carry over unchanged, which can be harmful.

- Phased (Module-by-Module)

Migrate critical areas first (e.g., product pages, checkout) and roll out iteratively. This suits large, complex sites where risk must be minimized and business continuity is critical. The trade-offs are longer timelines and dual maintenance during the transition.

- Rewrite & Cutover

Rebuild the solution from scratch and switch over at once. This fits when the current system doesn’t align with the future architecture, or when the business wants a clean slate for modernization.

Which option to recommend depends on several factors. Can the business tolerate downtime or dual maintenance? What are the timelines and budget? Is the current solution worth preserving, or is a rewrite inevitable? Does the strategy align with future goals?
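For the phased approach, one common way to run both platforms side by side is a strangler-fig routing rule at the CDN or reverse proxy. Migrated sections are sent to the new Sitecore site, and everything else stays on the legacy one. The Python below is a minimal sketch of that decision logic only. The prefixes and backend names are hypothetical, and in production this rule would typically live in CDN or proxy configuration rather than in application code.

```python
# Hypothetical routing table for a phased migration: path prefix -> backend.
# Trailing slashes keep "/products/" from also matching "/products-sale".
ROUTES: dict[str, str] = {
    "/products/": "new",
    "/products/archive/": "legacy",  # section not migrated yet
    "/checkout/": "new",
}


def choose_backend(path: str, routes: dict[str, str] = ROUTES, default: str = "legacy") -> str:
    """Pick the backend for a request path using longest-prefix matching."""
    matches = [prefix for prefix in routes if path.startswith(prefix)]
    if not matches:
        return default  # anything not yet migrated stays on the legacy site
    # The most specific (longest) prefix wins, so exceptions can override sections.
    return routes[max(matches, key=len)]


if __name__ == "__main__":
    for p in ("/products/shoes", "/products/archive/2019", "/about-us"):
        print(p, "->", choose_backend(p))
```

As each module goes live, its prefix moves to the new backend. When the table routes everything to the new site, the legacy platform can be retired and dual maintenance ends.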

Final Thoughts

Migrating to Sitecore is a strategic move that can unlock powerful capabilities for content management, personalization, and scalability. However, success lies in the preparation. By carefully evaluating your current architecture, integration needs, and team readiness, you can avoid common pitfalls and ensure a smoother transition. Taking the time to plan thoroughly today will save time, cost, and effort tomorrow, setting the stage for a future-proof digital experience platform.


The recent Forrester Customer Experience Summit in Nashville was a powerful reminder that customer experience (CX) is no longer a standalone function; it’s the connective tissue of modern business. From keynote sessions to hands-on workshops, one message resonated loud and clear: sustainable growth belongs to organizations that align brand, technology, and empathy to deliver experiences that are both meaningful and measurable.

One of the most compelling themes to emerge was the rise of Total Experience (TX)—a strategic fusion of brand, customer, employee, and digital experience. TX isn’t about doing everything; it’s about doing what matters most and doing it exceptionally well. It’s a shift from fragmented initiatives to a unified approach that drives both acquisition and retention.

What sets TX leaders apart? They understand what truly matters to their customers—and they act on it. According to Forrester, only a small fraction of businesses have a deep, actionable understanding of customer priorities. Yet those that do are pulling ahead, creating differentiated value and building loyalty in ways that competitors struggle to replicate.

Throughout the summit, themes like AI-powered personalization, intelligent creativity, and outcome-based design surfaced repeatedly. But these innovations were always grounded in human insight. Whether it was rethinking how we fund innovation, mapping journeys that move people, or building intelligent experiences that act with empathy, the focus was on creating value that feels personal, purposeful, and scalable.

For senior leaders, the implications are clear: TX is not a trend—it’s a transformation. It requires breaking down silos, investing in cross-functional collaboration, and embedding empathy into every touchpoint. It’s about designing experiences that not only meet expectations but elevate them.

If your organization isn’t currently presenting a Total Experience strategy to your customers, now is the time to assess the health of your business. Are your brand and CX teams aligned? Are your digital investments driving emotional connection as well as operational efficiency? Are you measuring what matters?

Our team of experts can help you evaluate your current experience strategy and identify opportunities to create more connected, customer-centric growth.

Perficient has officially announced a groundbreaking partnership with WRITER, the leader in agentic AI for the enterprise. This 360-degree collaboration marks a pivotal moment in our AI-first journey, combining WRITER’s powerful end-to-end agent platform with Perficient’s deep consulting expertise to deliver scalable, secure, and transformative AI solutions to the Global 2000.

To explore the significance of this partnership, I sat down with Bill Davis, Perficient’s Senior Vice President and Head of Partners and Ecosystem, to discuss what this means for our clients, our colleagues, and the future of enterprise AI.

Connor Stieferman: Bill, what makes this partnership with WRITER so significant for Perficient and the broader market?

Bill Davis: This partnership represents a major milestone not just for Perficient and WRITER, but for the enterprise AI landscape as a whole. WRITER is gaining serious momentum in the market, and their agentic AI platform is redefining how organizations think about productivity, automation, and intelligence at scale. By combining WRITER’s cutting-edge technology with Perficient’s deep industry expertise and global implementation capabilities, we’re creating a force multiplier for enterprise transformation. Together, we’re enabling organizations to move beyond isolated AI experiments and into scalable, secure, and measurable deployments.

To put it simply, this partnership sets a new standard for how AI can be adopted and operationalized across industries.

Connor: What makes Perficient a strong partner for a company like WRITER?

Bill: WRITER is leading the way in agentic AI, and companies at that level need partners who can match their pace and deliver enterprise-grade execution. Perficient brings deep industry expertise, a global delivery model, and a strong track record of helping large organizations adopt emerging technologies at scale. We understand how to translate innovation into business outcomes with speed and precision. We also add a critical strategic layer, helping clients identify where agentic AI can drive the most value, designing tailored solutions, and ensuring successful adoption. By jointly going to market with WRITER, we’re co-developing best-in-class, industry-specific agentic solutions that deliver real outcomes for enterprise customers.

Connor: How does this partnership reflect Perficient’s AI-first strategy?

Bill: Our AI-first strategy is about embedding intelligence into everything we do — from internal operations to client solutions. By broadly deploying WRITER agents across our own enterprise, we’re demonstrating a top-down and bottom-up commitment to transformation. We’re not just advising clients on AI; we’re living it. This partnership allows us to build and deploy custom agents that automate our own workflows, generate contextual content, and deliver insights, showcasing what’s possible when AI is fully integrated into a business.

Connor: What’s the significance of WRITER being Perficient’s first 360-degree partner?

Bill: It’s a testament to the depth of our collaboration. Our relationship extends well beyond going to market together. Each organization is deeply committed to the other’s success. The alignment extends across our executive, sales, marketing, and technology teams and reflects the strength of our shared vision. This level of partnership is rare, and it positions us to lead the market in agentic AI adoption.

Connor: What kind of value can clients expect from this collaboration?

Bill: Clients will see accelerated time-to-value through rapid deployment of tailored AI agents. They’ll benefit from embedded intelligence that integrates seamlessly with their existing systems, along with strategic guidance from our AI experts to ensure adoption and ROI. Plus, WRITER’s platform offers enterprise-grade security and governance, which is critical for large-scale deployments. Together, we’re helping clients cut through the noise and focus on fast, secure outcomes.

Connor: What excites you most about what’s ahead?

Bill: Honestly, it’s the opportunity to help our clients become agent builders themselves. We’re not just delivering tools — we’re enabling transformation. And we’re doing it alongside the exceptional team at WRITER. They’re agile, collaborative, and deeply committed to moving fast and getting things done right. Partnerships work best when both sides are aligned, and WRITER brings the same obsession over client outcomes that we value at Perficient. Together, we’re empowering organizations to reinvent how they work, innovate, and grow. The future of enterprise AI is agentic, and Perficient and WRITER are at the forefront of making that future real.

Final Thoughts

Perficient’s partnership with WRITER is a bold step forward in our mission to transform enterprises through AI. By combining cutting-edge technology with deep consulting expertise, we’re helping clients unlock the full potential of agentic AI.

Stay tuned for more updates as we roll out new solutions, launch innovation labs, and continue to lead the way in enterprise AI transformation.

Modern Healthcare has once again recognized Perficient among the largest healthcare management consulting firms in the U.S., ranking us ninth in its 2025 survey. This honor reflects not only our growth but also our commitment to helping healthcare leaders navigate complexity with clarity, precision, and purpose.

What’s Driving Demand: Innovation with Intent

As provider, payer, and MedTech organizations face mounting pressure to modernize, our work is increasingly focused on connecting digital investments to measurable business and health outcomes. The challenges are real—and so are the opportunities.

Healthcare leaders are engaging our experts as they shift from digital experimentation to enterprise alignment in business-critical areas, including:

- Digital health transformation that eases access to care.

- AI and data analytics that accelerate insight, guide clinical decisions, and personalize consumer experiences.

- Workforce optimization that supports clinicians, streamlines operations, and restores time to focus on patients, members, brokers, and care teams.

These investments represent strategic maturity that reshapes how care is delivered, experienced, and sustained.

Operational Challenges: Strategy Meets Reality

Serving healthcare clients means working inside a system that resists simplicity. Our industry, technical, and change management experts help leaders address three persistent tensions:

- Aligning digital strategy with enterprise goals. Innovation often lacks a shared compass. We translate divergent priorities—clinical, operational, financial—into unified programs that drive outcomes.

- Controlling costs while preserving agility. Budgets are tight, but the need for speed and competitive relevance remains. Our approach favors scalable roadmaps and solutions that deliver early wins and can flex as the healthcare marketplace and consumer expectations evolve.

- Preparing the enterprise for AI. Many of our clients have discovered that their AI readiness lags behind ambition. We help build the data foundations, governance frameworks, and workforce capabilities needed to operationalize intelligent systems.


Consumer Expectations: Access Is the New Loyalty

Our Access to Care research, based on insights from more than 1,000 U.S. healthcare consumers, reveals a fundamental shift: if your healthcare organization isn’t delivering a seamless, personalized, and convenient experience, consumers will go elsewhere. And they won’t always come back.

Many healthcare leaders still view competition as other hospitals or clinics in their region. But today’s consumer has more options—and they’re exercising them. From digital-first health experiences to hyper-local disruptors and retail-style health providers focused on accessibility and immediacy, the competitive field is rapidly expanding.

- Digital convenience is now a baseline. More than half of consumers who encountered friction while scheduling care went elsewhere.

- Caregivers are underserved. One in three respondents manage care for a loved one, yet most digital strategies treat the patient as a single user.

- Digital-first care is mainstream. 45% of respondents aged 18–64 have already used direct-to-consumer digital care, and 92% of those adopters believe the quality is equal to or better than the care offered by their regular healthcare system.

These behaviors demand a rethinking of access, engagement, and loyalty. We help clients build experiences that are intuitive, inclusive, and aligned with how people actually live and seek care.

Looking Ahead: Complexity Accelerates

With intensified focus on modernization, data strategy, and responsible AI, healthcare leaders are asking harder questions. We’re helping them find and activate answers that deliver value now and build resilience for what’s next.

Our technology partnerships with Adobe, AWS, Microsoft, Salesforce, and other platform leaders allow us to move quickly, integrate deeply, and co-innovate with confidence. We bring cross-industry expertise from financial services, retail, and manufacturing—sectors where personalization and operational excellence are already table stakes. That perspective helps healthcare clients leapfrog legacy thinking and adopt proven strategies. And our fluency in HIPAA, HITRUST, and healthcare data governance ensures that our digital solutions are compliant, resilient, and future-ready.

Optimized, Agile Strategy and Outcomes for Health Insurers, Providers, and MedTech

Discover why we have been trusted by the 10 largest U.S. health systems, 10 largest U.S. health insurers, and 14 of the 20 largest medical device firms. We are recognized in analyst reports and regularly awarded for our excellence in solution innovation, industry expertise, and being a great place to work.

Contact us to explore how we can help you forge a resilient, impactful future that delivers better experiences for patients, caregivers, and communities.

To mark its hundredth anniversary, Automotive News launched a series on the technological innovations driving the shift to “software-defined vehicles” (SDVs). The articles explore the fragmented U.S. roadmap to software integration, intensifying global competition, OEM progress to date, and the uncertainties shaping automakers’ strategies.

This coverage signals an evolution. As automakers race to redefine themselves as technology companies, the industry is grappling with how software will reshape value, competition, and consumer trust.

Perficient’s Connected Products Research Provides Clarity

At Perficient, our latest connected products research, “Build a Powerful Connected Products Strategy,” adds a critical layer of data to the SDV conversation: it distinguishes what consumers actually care about from what automakers think matters most.

“OEMs are making strategic bets on software-defined vehicles, but consumers are weighing very different factors when making purchase decisions. The gap between those perspectives is significant — and closing it is critical,” said Justin Huckins, Director, Strategy, Automotive Industry Lead.

This difference matters. OEMs navigating still-undefined SDV pathways need data-based consumer signals to guide product architecture, user experience, data governance, and connected services. Otherwise, they risk widening the gap between industry emphasis and end-user expectations.

Four Key Insights: Where Automakers and Consumers Diverge

Our research gathered perspectives from 1,307 respondents including consumers, OEMs, and manufacturers, surfacing a series of misalignments:

- Trust is the top consumer priority, but not for manufacturers. Only 22% of manufacturers flagged “trust” as a critical factor in adoption — yet consumers consistently rank it #1.

- Consumers don’t feel informed about data collection. Despite widespread anxiety about data privacy, only 19% of consumers feel fully aware of what their vehicles are collecting.

- Experience outweighs engineering. “Consumers are more worried about the experience than the engineering. OEMs often enter the connected space for internal reasons — field data, R&D — but buyers expect tangible value in everyday use,” said Kevin Espinosa, Director, Strategy, Manufacturing Industry Lead.

- Integration matters. 38% of consumers highlighted integration with other connected devices as a key feature. This points to expectations of seamless cross-device ecosystems — from vehicles to homes to mobile apps.

Why This Research Matters in the Race to Software-Defined Vehicles

- Trust as a foundation: Software-defined vehicles amplify consumer concerns around data handling, privacy, and transparency. “It’s alarming that manufacturers and their customers don’t value trust in the same way,” said Jim Hertzfeld, Area Vice President. Trust is no longer a secondary issue; it’s the foundation for adoption.

- The global race, China’s edge & collaboration: As Automotive News highlights, China’s accelerated SDV progress creates global pressure. Collaborative development models will only succeed if OEMs align around consumer trust and clarity.

- Roadmap clarity through consumer signals: With the SDV roadmap still fuzzy, consumer insights provide directional clarity on where to invest — from UX and subscriptions to data governance and operating models. “OEMs are looking to monetize, but customers are looking for better experiences. Whoever reconciles that disconnect first will lead the next wave of SDVs,” added Justin.

Shaping SDV Strategies Around What Buyers Value

As the SDV era accelerates — with new architectures, shared development models, and software updates over the air — aligning strategy with consumer expectations around transparency and privacy will be just as important as the technology itself.

Leveraging our position as the global AI-first consultancy, Perficient partners with Fortune 2000 companies across industries—healthcare, financial services, manufacturing, and more—to digitize operations, modernize with cloud and AI, and unlock insights from connected data platforms. That cross-industry vantage point matters, because there are now more connected products in the world than people, and vehicles are becoming one of the most important nodes in this ecosystem.

If you’re exploring how SDV strategies can be shaped by what buyers truly value, our research offers a tangible perspective. Download “Build a Powerful Connected Products Strategy” or schedule an interview with our industry leaders to discuss how consumer insights can inform your software-defined vehicle strategy.

The move to SaaS is one of the biggest shifts happening in digital experience. It’s not just about technology; it’s about making platforms simpler, faster, and more adaptable to the pace of customer expectations.

Sitecore has leaned in with a clear vision: “It’s SaaS. It’s Simple. It’s Sitecore.”

Here are five reasons why more organizations are turning to Sitecore SaaS to power their digital experience strategies:

1. Simplicity: A Modern Foundation

Sitecore SaaS solutions like XM Cloud remove the burden of managing infrastructure and upgrades.

- No more complex version upgrades: updates happen automatically.

- Reduced reliance on IT for day-to-day maintenance.

- A leaner, more cost-effective foundation for marketing teams.

By simplifying operations, companies can focus on what matters most: delivering exceptional digital experiences.

2. Speed-to-Value: Launch Faster

Traditional DXPs can take months (or more) to implement and optimize. Sitecore SaaS is designed for speed:

- Faster deployments with prebuilt components.

- Seamless integrations with other SaaS and cloud tools.

- Empowerment for marketers to build and launch campaigns without heavy dev cycles.

Organizations adopting Sitecore SaaS are moving from planning to execution faster than ever.

3. Scalability: Grow Without Rebuilds

As customer expectations grow, so does the need to scale digital experiences quickly. Sitecore SaaS allows companies to:

- Spin up new sites, regions, or languages without starting from scratch.

- Adjust to spikes in demand without disruption.

- Add capabilities as the business evolves — without heavy upfront investment.

This scalability ensures brands can adapt as fast as their audiences do.

4. Continuous Innovation: Always Current

One of the most frustrating parts of traditional platforms is the upgrade cycle. Sitecore SaaS solves this with:

- Automatic access to the latest innovations — no disruptive “big bang” upgrades.

- Built-in adoption of emerging technologies like AI and machine learning.

- A platform that’s always modern, not years behind.

With Sitecore SaaS, companies get a future-proof DXP that evolves with them.

5. Composability Without the Complexity

Composable DXPs promise flexibility, but without the right foundation they can feel overwhelming. Sitecore SaaS makes composability practical:

- Start with XM Cloud as a core CMS foundation.

- Add personalization, commerce, or search when ready.

- Use APIs to integrate best-of-breed tools, without losing control.

This approach ensures organizations adopt what they need, when they need it, without the complexity of managing multiple disconnected systems.

Why It Matters

Companies aren’t moving to Sitecore SaaS just to keep up with technology. They’re moving because it makes their organizations more agile, efficient, and competitive. SaaS with Sitecore means simpler operations, faster launches, continuous innovation, and a platform that grows alongside your business.

At Perficient Hyderabad, excellence goes beyond the desk – into every serve, every strategy and every smash. This year’s Sports Fest brought together sharp minds and swift reflexes in three exciting events: Chess, Table Tennis and Badminton Doubles.

From strategic battles to high-energy rallies, our players gave it their all. Let’s relive the highlights and honour those who stood out!

Spotlight on Our Champions: Winners & Runners-Up

Here’s to the stars who rose to the challenge and made their mark. Their performance, passion and perseverance earned them well-deserved recognition.

To celebrate their dedication and competitive excellence, medals and certificates of achievement were presented to all winners and runners-up.


| Event | Winners | Runners-Up |
|---|---|---|
| Chess | Naga Sandeep Mullangi | Ramasani Sowmith |
| Table Tennis Men | Madan Kumar Mothkur | Hemanth Sreenu Neelam |
| Table Tennis Women | Naga Pujitha Mummidivarapu | Sandhya Rani Meesala |
| Badminton Doubles Men | Madan Kumar Mothkur, Sasi Pavan Darapureddy | Naga Sandeep Mullangi, Pramod Sagar Kolakani |
| Badminton Doubles Women | Sai Keerthana Anumandla, Adhira Sobha | Aadela Nishat, Hiba Naaz |

Each match had its own rhythm; some were slow burns of strategy, others fast-paced thrillers. But across the board, one thing stayed constant: the drive to give it their best and enjoy the game.

Beyond the Podium: The True Spirit of Play

While the medals were a highlight, the real victory was in the companionship, the cheers and the shared moments. From spontaneous coaching to friendly rivalries, the Sports Fest reminded us that play brings people together in the best ways.

Whether it was a calculated checkmate or a last-minute smash, the spirit of play was alive in every match and every moment.

And let’s not forget the sidelines – where teammates turned into cheerleaders, advice flowed freely, and laughter echoed between matches. It wasn’t just about winning; it was about showing up, supporting each other and having fun.

What’s Next: More Matches, More Memories

As we wrap up this season, we’re already excited for what’s ahead. More games, more glory, more chances to connect beyond the workplace.

To all our participants: thank you for showing up, stepping up and playing with heart. You’ve made Perficient proud, and we can’t wait to see you in action again!

This Sports Fest proved once again that at Perficient Hyderabad, excellence goes beyond the desk and into every moment of play.

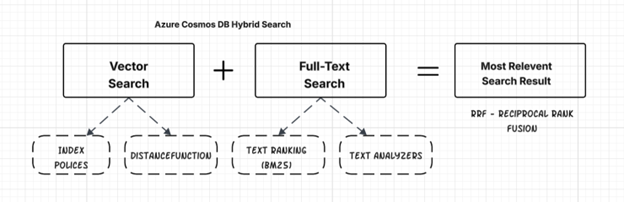

Azure Cosmos DB for NoSQL now supports hybrid search, a powerful feature that combines full-text search and vector search to deliver highly relevant and accurate results. This blog post provides a comprehensive guide for developers and architects to understand, implement, and leverage hybrid search capabilities in their applications.

- What is hybrid search?

- How hybrid search works in Cosmos DB

- Vector embedding

- Implementing hybrid search

  - Enable hybrid search

  - Container setup and indexing

  - Data ingestion

  - Search queries

  - Code example

What is Hybrid Search?

Hybrid search is an advanced search technology that combines keyword search (also known as full-text search) and vector search to deliver more accurate and relevant search results. It leverages the strengths of both approaches to overcome the limitations of each when used in isolation.

Key Components

- Full-Text Search: This traditional method matches the words you type in, using techniques like stemming, lemmatization, and fuzzy matching to find relevant documents. It excels at finding exact matches and is efficient for structured queries with specific terms. In Cosmos DB, it uses the BM25 algorithm to rank records by keyword matching and text relevance.

- Vector Search: This method uses machine learning models to represent queries and documents as numerical embeddings in a multidimensional space, allowing the system to find items with similar characteristics and relationships, even if the exact keywords don’t match. Vector search is particularly useful for finding information that’s conceptually similar to the search query.

- Reciprocal Rank Fusion (RRF): This algorithm merges the results from both keyword and vector search, creating a single, unified ranked list of documents. RRF ensures that relevant results from both search types are fairly represented.
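The fusion step can be sketched in a few lines of C#. This sketch follows the textbook RRF formula. A document's fused score is the sum of 1 / (k + rank) over every ranked list it appears in. The constant k = 60 is the value commonly used in the RRF literature. It is an assumption here, not a documented Cosmos DB setting. The document IDs are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Reciprocal Rank Fusion. Each input list is ordered best match first.
// A document at 1-based rank r contributes 1 / (k + r) to its fused score.
// k = 60 is a commonly used default (assumption, not a Cosmos DB setting).
static List<(string Id, double Score)> Rrf(IEnumerable<IReadOnlyList<string>> rankedLists, int k = 60)
{
    var scores = new Dictionary<string, double>();
    foreach (var list in rankedLists)
    {
        for (int i = 0; i < list.Count; i++)
        {
            int rank = i + 1; // convert the 0-based index to a 1-based rank
            scores[list[i]] = scores.GetValueOrDefault(list[i]) + 1.0 / (k + rank);
        }
    }

    // Highest fused score first; ties are broken by ID for deterministic output.
    return scores
        .OrderByDescending(kv => kv.Value)
        .ThenBy(kv => kv.Key, StringComparer.Ordinal)
        .Select(kv => (Id: kv.Key, Score: kv.Value))
        .ToList();
}

// Hypothetical results from the two searches, best match first.
var vectorRanking = new List<string> { "doc-3", "doc-1", "doc-7" };
var keywordRanking = new List<string> { "doc-1", "doc-5", "doc-3" };

foreach (var (id, score) in Rrf(new[] { vectorRanking, keywordRanking }))
    Console.WriteLine($"{id}: {score:F5}");
```

With these inputs, doc-1 and doc-3 appear in both lists, so they finish first and second. They rank ahead of doc-5 and doc-7, which each appear only once. This is how RRF rewards agreement between the two searches without comparing their raw scores, which are on different scales.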

Hybrid search is suitable for various use cases, such as:

- Retrieval Augmented Generation (RAG) with LLMs

- Knowledge management systems: Enabling employees to efficiently find pertinent information within an enterprise knowledge base.

- Content Management: Efficiently search through articles, blogs, and documents.

- AI-powered chatbots

- E-commerce platforms: Helping customers find products based on descriptions, reviews, and other text attributes.

- Streaming services: Helping users find content based on specific titles or themes.

Before diving into the hybrid search implementation, let’s look at vector search and full-text search individually.

Understanding Vector Search

Vector search in Azure Cosmos DB for NoSQL is a powerful feature that allows you to find similar items based on their semantic meaning, rather than relying on exact matches of keywords or specific values. It is a fundamental component for building AI applications, semantic search, recommendation engines, and more.

Here’s how vector search works in Cosmos DB:

Vector embeddings

Vector embeddings are numerical representations of data in a high-dimensional space that capture its semantic meaning. In this space, semantically similar items are represented by vectors that are closer to each other. These vectors can have many dimensions (for example, 1536 for text-embedding-ada-002). The Vector Embedding section later in this post covers how to generate them.

Storing and indexing vectors

Azure Cosmos DB allows you to store vector embeddings directly within your documents. You define a vector policy for your container to specify the vector data’s path, data type, and dimensions. Cosmos DB supports various vector index types to optimize search performance, accuracy, and cost:

- Flat: Provides exact k-nearest neighbor (KNN) search.

- Quantized Flat: Runs a brute-force search over quantized (compressed) vectors. This improves latency and cost at the expense of a small amount of accuracy.

- DiskANN: Enables highly scalable and accurate Approximate Nearest Neighbor (ANN) search.
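Conceptually, the exact k-NN search offered by the Flat index compares the query vector with every stored vector and keeps the k closest. The following C# sketch shows that idea in memory using cosine similarity. It is not Cosmos DB's internal implementation. The 3-dimensional vectors and document IDs are hypothetical; real text-embedding-ada-002 vectors have 1536 dimensions.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Cosine similarity: dot(a, b) / (|a| * |b|). A value of 1 means the vectors point the same way.
static double CosineSimilarity(float[] a, float[] b)
{
    if (a.Length != b.Length)
        throw new ArgumentException("Vectors must have the same number of dimensions.");

    double dot = 0, normA = 0, normB = 0;
    for (int i = 0; i < a.Length; i++)
    {
        dot += (double)a[i] * b[i];
        normA += (double)a[i] * a[i];
        normB += (double)b[i] * b[i];
    }
    return dot / (Math.Sqrt(normA) * Math.Sqrt(normB));
}

// Exact (brute-force) k-NN: score every stored vector, then keep the k most similar.
static List<(string Id, double Similarity)> ExactKnn(
    IReadOnlyDictionary<string, float[]> vectors, float[] query, int k) =>
    vectors
        .Select(kv => (Id: kv.Key, Similarity: CosineSimilarity(kv.Value, query)))
        .OrderByDescending(r => r.Similarity)
        .Take(k)
        .ToList();

// Hypothetical 3-dimensional embeddings.
var store = new Dictionary<string, float[]>
{
    ["doc-1"] = new[] { 0.9f, 0.1f, 0.0f },
    ["doc-2"] = new[] { 0.0f, 1.0f, 0.0f },
    ["doc-3"] = new[] { 0.7f, 0.3f, 0.1f },
};
var queryVector = new[] { 1.0f, 0.0f, 0.0f };

foreach (var (id, sim) in ExactKnn(store, queryVector, k: 2))
    Console.WriteLine($"{id}: {sim:F3}");
```

Because every stored vector is scored, the results are exact, but the cost grows linearly with the number of documents. Approximate indexes such as DiskANN are designed to address that trade-off.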

Querying

- Azure Cosmos DB provides the VectorDistance() system function. You can use it in queries to compute the similarity between stored vectors and a query vector, and to rank results accordingly.

Understanding Full-Text Search

Azure Cosmos DB for NoSQL now offers full-text search. At the time of writing, this feature is in preview and may not be available in all Azure regions. It lets you run powerful and efficient text-based searches on your documents directly in the database. This significantly enhances your application’s search capabilities without the need for an external search service for basic full-text needs.

Indexing

To enable full-text search, you need to define a full-text policy specifying the paths for searching and add a full-text index to your container’s indexing policy. Without the index, full-text searches would perform a full scan. Indexing involves tokenization, stemming, and stop word removal, creating a data structure like an inverted index for fast retrieval. Multi-language support (beyond English) and stop word removal are in early preview.
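To illustrate the indexing steps described above, here is a deliberately simplified C# sketch of tokenization, stop word removal, and an inverted index. It lowercases text, splits on anything that is not a letter or digit, and drops a small hand-picked stop word list. It performs no stemming. Cosmos DB's actual tokenizer, stemmer, and stop word lists are not reproduced here, and the sample documents are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.RegularExpressions;

// Small illustrative stop word list (assumption); real engines use larger, language-specific lists.
var stopWords = new HashSet<string> { "a", "an", "and", "the", "of", "to", "in", "for", "is" };

// Lowercase, split on runs of non-letter/non-digit characters, drop empty tokens and stop words.
// No stemming is performed in this sketch.
List<string> Tokenize(string text) =>
    Regex.Split(text.ToLowerInvariant(), @"[^\p{L}\p{N}]+")
         .Where(t => t.Length > 0 && !stopWords.Contains(t))
         .ToList();

// Inverted index: term -> sorted set of IDs of the documents that contain the term.
Dictionary<string, SortedSet<string>> BuildIndex(IReadOnlyDictionary<string, string> docs)
{
    var index = new Dictionary<string, SortedSet<string>>();
    foreach (var (id, text) in docs)
    {
        foreach (var term in Tokenize(text))
        {
            if (!index.TryGetValue(term, out var postings))
                index[term] = postings = new SortedSet<string>(StringComparer.Ordinal);
            postings.Add(id);
        }
    }
    return index;
}

// Hypothetical documents.
var sampleDocs = new Dictionary<string, string>
{
    ["doc-1"] = "Hybrid search in Azure Cosmos DB",
    ["doc-2"] = "Vector search and full-text search",
    ["doc-3"] = "Indexing policy for the container",
};
var invertedIndex = BuildIndex(sampleDocs);

Console.WriteLine(string.Join(", ", invertedIndex["search"])); // doc-1, doc-2
```

Looking up a term is a single dictionary access instead of a scan over every document. That is the benefit the full-text index provides.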

Querying

Cosmos DB provides system functions for full-text search in the NoSQL query language. These include FullTextContains, FullTextContainsAll, and FullTextContainsAny for filtering in the WHERE clause. The FullTextScore function uses the BM25 algorithm to rank documents by their relevance.
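To give a feel for the kind of score FullTextScore produces, the following C# sketch implements the standard Okapi BM25 formula over pre-tokenized documents. The parameters k1 = 1.2 and b = 0.75 are common textbook defaults. The IDF variant adds 1 inside the logarithm, which keeps scores non-negative; it is one of several variants in use. This post does not state Cosmos DB's exact BM25 parameters, so the sketch illustrates BM25 in general and does not reimplement FullTextScore. The documents and query terms are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Okapi BM25 over pre-tokenized documents.
// k1 controls term-frequency saturation; b controls document-length normalization.
static double Bm25(IReadOnlyList<string> queryTerms, IReadOnlyList<string> doc,
                   IReadOnlyList<IReadOnlyList<string>> corpus, double k1 = 1.2, double b = 0.75)
{
    int n = corpus.Count;
    double avgDocLength = corpus.Average(d => d.Count);
    double score = 0;

    foreach (var term in queryTerms.Distinct())
    {
        int docsWithTerm = corpus.Count(d => d.Contains(term));
        // IDF with +1 inside the log so that rare and common terms both give non-negative weights.
        double idf = Math.Log((n - docsWithTerm + 0.5) / (docsWithTerm + 0.5) + 1);
        int tf = doc.Count(t => t == term);
        score += idf * (tf * (k1 + 1)) / (tf + k1 * (1 - b + b * doc.Count / avgDocLength));
    }
    return score;
}

// Hypothetical, already-tokenized documents.
var tokenizedDocs = new List<string[]>
{
    new[] { "hybrid", "search", "cosmos", "db" },
    new[] { "vector", "search", "embeddings" },
    new[] { "container", "indexing", "policy" },
};
var searchTerms = new[] { "hybrid", "search" };

for (int i = 0; i < tokenizedDocs.Count; i++)
    Console.WriteLine($"doc-{i + 1}: {Bm25(searchTerms, tokenizedDocs[i], tokenizedDocs):F4}");
```

The first document matches both query terms and scores highest. The second matches only "search", which appears in more documents and so has a lower IDF. The third matches neither and scores zero.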

How Hybrid Search Works in Cosmos DB

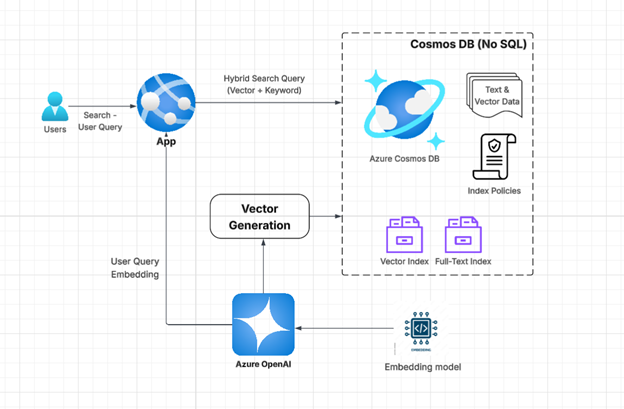

- Data Storage: Your documents in Cosmos DB include both text fields (for full-text search) and vector embedding fields (for vector search).

- Indexing:

  - Full-Text Index: A full-text policy and index are configured on your text fields, enabling keyword-based searches.

  - Vector Index: A vector policy and index are configured on your vector embedding fields, allowing for efficient similarity searches based on semantic meaning.

- Querying: A single query request is used to initiate hybrid search, including both full-text and vector search parameters.

- Parallel Execution: The vector and full-text search components run in parallel.

  - VectorDistance() measures vector similarity.

  - FullTextContains() or similar functions find keyword matches, and `FullTextScore()` ranks results using BM25.

- Result Fusion: The RRF function merges the rankings from both searches (vector & full text), creating a combined, ordered list based on overall relevance.

- Enhanced Results: The final results are highly relevant, leveraging both semantic understanding and keyword precision.

Vector Embedding

Vector embedding refers to the process of transforming data (such as text or images) into a series of numbers, or a vector, that captures its semantic meaning. In this n-dimensional space, similar data points are mapped closer together. This lets computers understand and analyze relationships that would be difficult to detect in raw data.

To support hybrid search in Azure Cosmos DB, enhance the data by generating vector embeddings from searchable text fields. Store these embeddings in dedicated vector fields alongside the original content to enable both semantic and keyword-based queries.

Steps to generate embeddings with Azure OpenAI models

Provision Azure OpenAI Resource

- Sign in to the Azure portal: Go to https://portal.azure.com and log in.

- Create a resource: Select “Create a resource” from the Azure dashboard and search for “Azure OpenAI”.

Deploy Embedding Model

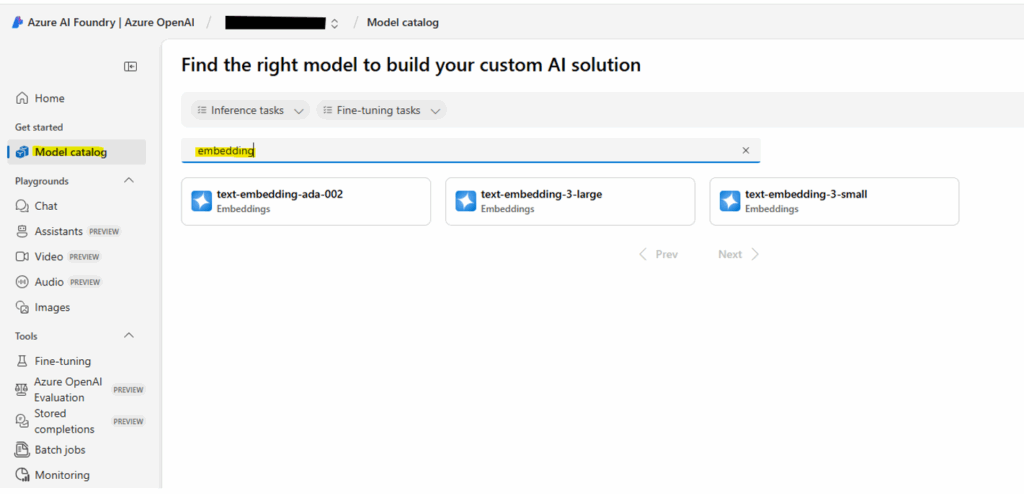

- Navigate to your newly created Azure OpenAI resource and click on “Explore Azure AI Foundry portal” in the overview page.

- Go to the model catalog and search for embedding models.

- Select embedding model:

- From the embedding model list, choose an embedding model like text-embedding-ada-002, text-embedding-3-large, or text-embedding-3-small.

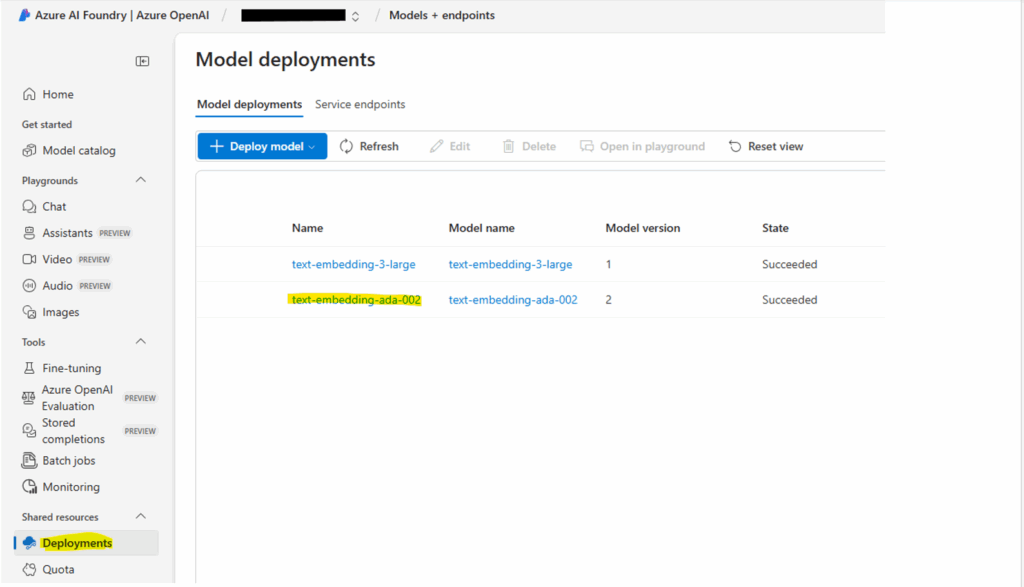

- Deployment name: Provide a unique name for your deployment. This name is crucial for accessing the model via the API.

- Deploy the model: Click “Create” to deploy the embedding model.

- After deployment, the model deployment will appear under the ‘Deployments’ section.

Accessing and utilizing embeddings

- Endpoint and API Key: After deployment, navigate to your Azure OpenAI resource and find the “Keys and Endpoint” under “Resource Management”. Copy these values as they are needed for authenticating API calls.

- Integration with applications: Use the Azure OpenAI SDK or REST APIs in your applications, referencing the deployment name and the retrieved endpoint and API key to generate embeddings.

Code example for .NET

Note: Ensure you have the .NET 8 SDK installed and a reference to the Azure.AI.OpenAI NuGet package.

```csharp
using Azure;
using Azure.AI.OpenAI;
using System;
using System.Linq;
using System.Threading.Tasks;

namespace AzureOpenAIEmbeddings
{
    class Program
    {
        static async Task Main(string[] args)
        {
            // Read the Azure OpenAI endpoint and API key from environment variables;
            // the fallback strings are placeholders to replace with your own values.
            string endpoint = Environment.GetEnvironmentVariable("AZURE_OPENAI_ENDPOINT")
                ?? "https://YOUR_RESOURCE_NAME.openai.azure.com/";
            string apiKey = Environment.GetEnvironmentVariable("AZURE_OPENAI_API_KEY")
                ?? "YOUR_API_KEY";

            // Create an OpenAIClient. OpenAIClient and EmbeddingOptions come from the later
            // 1.0.0-beta releases of Azure.AI.OpenAI; version 2.x uses AzureOpenAIClient instead.
            var credentials = new AzureKeyCredential(apiKey);
            var openaiClient = new OpenAIClient(new Uri(endpoint), credentials);

            // Create embedding options
            EmbeddingOptions embeddingOptions = new()
            {
                DeploymentName = "text-embedding-ada-002", // Replace with your deployment name
                Input = { "Your text for generating embedding" }, // Text to generate an embedding for
            };

            // Generate embeddings
            var returnValue = await openaiClient.GetEmbeddingsAsync(embeddingOptions);

            // Copy the embedding into a float[] so it can be stored in Cosmos DB with the text content
            float[] embedding = returnValue.Value.Data[0].Embedding.ToArray();
            Console.WriteLine($"Generated an embedding with {embedding.Length} dimensions.");
        }
    }
}
```

Implementing Hybrid Search

Implementing hybrid search in Azure Cosmos DB for NoSQL involves several key steps that combine vector search and full-text search. The following diagram illustrates the hybrid search architecture in Azure Cosmos DB. Azure OpenAI generates the embeddings, and the query combines vector-based and keyword-based search:

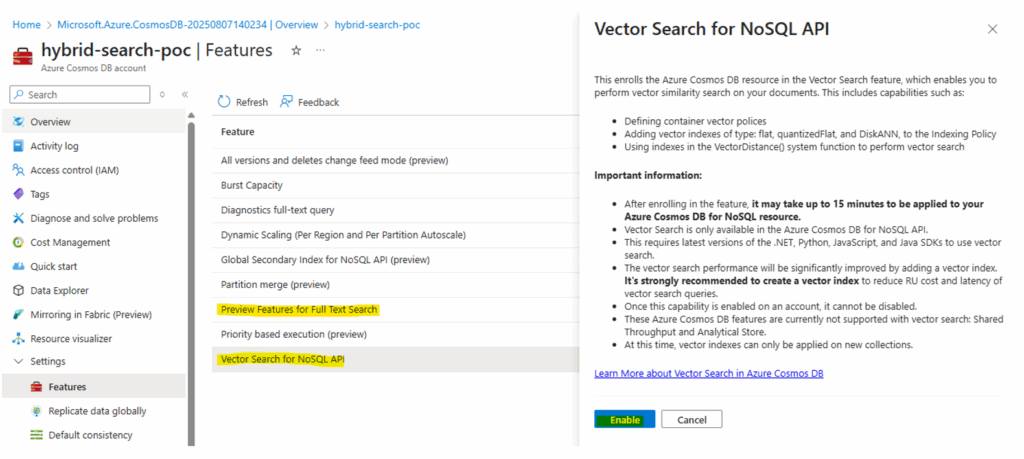

Step 1: Enable hybrid search in the Cosmos DB account

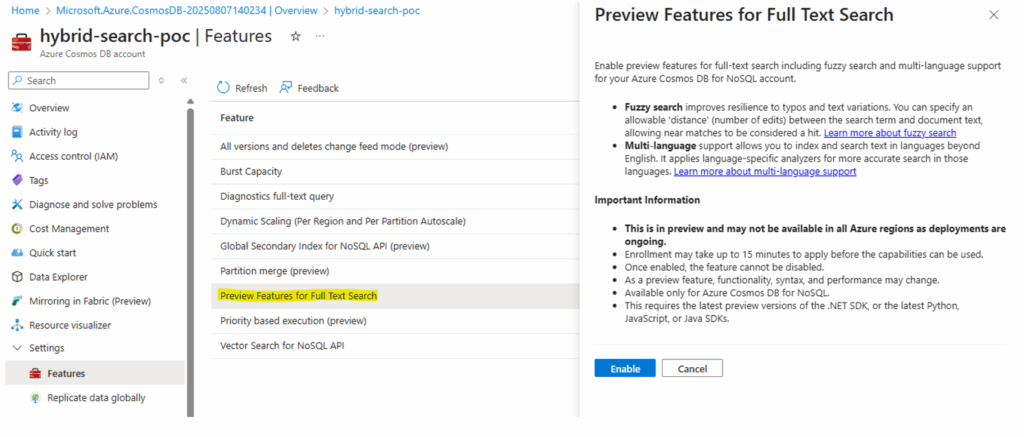

To implement hybrid search in Cosmos DB, begin by enabling both vector search and full-text search on the Azure Cosmos DB account.

- Navigate to your Azure Cosmos DB for NoSQL resource page.

- Access the Features pane:

  - Select the “Features” pane under the “Settings” menu item.

- Enable Vector Search:

  - Locate and select “Vector Search for NoSQL.” Read the description to understand the feature.

  - Click “Enable” to activate vector indexing and search capabilities.

- Enable Full-Text Search:

  - Locate and select “Preview Feature for Full-Text Search” (Full-Text Search for NoSQL API (preview)). Read the description to confirm your intention to enable it.

  - Click “Enable” to activate full-text indexing and search capabilities.

Notes:

- Once these features are enabled, they cannot be disabled.

- Full-Text Search (preview) may not be available in all regions at this time.

Step 2: Container Setup and Indexing

- Create a database and container, or use an existing one.

- Note: The vector embedding policy must be specified when a container is created, so you may not be able to add vector search to an existing container. In that case, create a new container.

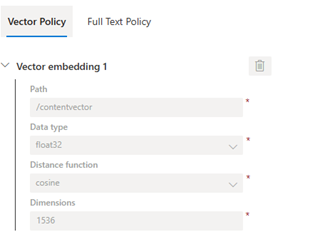

- Define the vector embedding policy on the container.

- You need to specify a vector embedding policy for the container during its creation. This policy defines how vectors are treated at the container level.

```json
{
  "vectorEmbeddings": [
    {
      "path": "/contentvector",
      "dataType": "float32",
      "distanceFunction": "cosine",
      "dimensions": 1536
    }
  ]
}
```

- Path: Specify the JSON path to your vector embedding field (e.g., /contentvector).

- Data type: Define the data type of the vector elements (e.g., float32).

- Dimensions: Specify the dimensionality of your vectors (e.g., 1536 for text-embedding-ada-002).

- Distance Function: Choose the distance metric for similarity calculation (e.g., cosine, dotProduct, or euclidean)
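A small C# sketch makes it easier to compare the three distance functions side by side. The vectors are hypothetical. Each metric moves in a different direction: cosine similarity and dot product increase as vectors become more similar, whereas Euclidean distance decreases. For unit-length vectors, cosine similarity and dot product produce the same value.

```csharp
using System;
using System.Linq;

// Dot product, vector length, and the three metrics named in the vector embedding policy.
static double Dot(float[] a, float[] b) => a.Zip(b, (x, y) => (double)x * y).Sum();
static double Norm(float[] a) => Math.Sqrt(Dot(a, a));
static double Cosine(float[] a, float[] b) => Dot(a, b) / (Norm(a) * Norm(b));
static double Euclidean(float[] a, float[] b) =>
    Math.Sqrt(a.Zip(b, (x, y) => ((double)x - y) * ((double)x - y)).Sum());

// Scale a vector to unit length.
static float[] Normalize(float[] a)
{
    double n = Norm(a);
    return a.Select(x => (float)(x / n)).ToArray();
}

// Hypothetical 2-dimensional vectors.
var u = new[] { 3f, 4f };
var v = new[] { 4f, 3f };
Console.WriteLine($"cosine    = {Cosine(u, v):F4}");    // higher means more similar
Console.WriteLine($"dot       = {Dot(u, v):F4}");       // higher means more similar, scale-dependent
Console.WriteLine($"euclidean = {Euclidean(u, v):F4}"); // lower means more similar

// After normalization, cosine similarity and dot product agree.
var un = Normalize(u);
var vn = Normalize(v);
Console.WriteLine($"unit vectors: cosine = {Cosine(un, vn):F4}, dot = {Dot(un, vn):F4}");
```

The raw dot product depends on vector length as well as direction, whereas cosine similarity depends only on direction. For unit-length vectors, the two give the same value.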

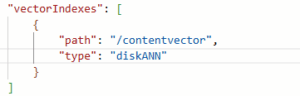

- Add Vector Index: Add a vector index to your container’s indexing policy. This enables efficient vector similarity searches.

  - Path: Include the same vector path defined in your vector policy.

  - Type: Select the appropriate index type (flat, quantizedFlat, or diskANN).

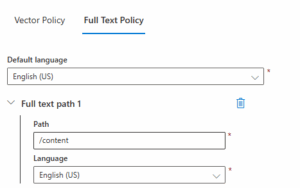

- Define Full-Text Policy: Define a container-level full-text policy. This policy specifies which paths in your documents contain the text content that you want to search.

  - Path: Specify the JSON path to your text search field.

  - Language: Specify the language of the content (for example, en-US).

- Add Full-Text Index: Add a full-text index to the indexing policy so that full-text searches use the index instead of scanning every document.

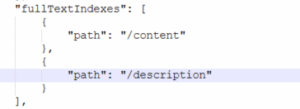

Hybrid search indexing policy (both full-text and vector indexes):

```json
{
  "indexingMode": "consistent",
  "automatic": true,
  "includedPaths": [
    { "path": "/*" }
  ],
  "excludedPaths": [
    { "path": "/\"_etag\"/?" },
    { "path": "/contentvector/*" }
  ],
  "fullTextIndexes": [
    { "path": "/content" },
    { "path": "/description" }
  ],
  "vectorIndexes": [
    { "path": "/contentvector", "type": "diskANN" }
  ]
}
```

Exclude the Vector Path:

- To optimize performance during data ingestion, add the vector path to the “excludedPaths” section of your indexing policy (for example, /contentvector/*). This keeps the vector out of the default range indexes, which would otherwise increase RU charges and latency on writes.
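When indexing policies are generated or edited programmatically, it is easy to forget this exclusion. The hypothetical helper below returns a copy of a policy with each vector path excluded using the `/path/*` wildcard form that appears in the hybrid indexing policy above; it is a sketch, not an SDK function.

```python
import copy


def exclude_vector_paths(indexing_policy, vector_paths):
    """Return a copy of indexing_policy with every vector path excluded from range indexing.

    A vector stored at /contentvector is excluded with the wildcard form /contentvector/*.
    """
    policy = copy.deepcopy(indexing_policy)  # leave the caller's dict untouched
    excluded = policy.setdefault("excludedPaths", [])
    existing = {entry["path"] for entry in excluded}
    for path in vector_paths:
        wildcard = path.rstrip("/") + "/*"
        if wildcard not in existing:  # avoid duplicate entries on repeated calls
            excluded.append({"path": wildcard})
            existing.add(wildcard)
    return policy


base = {"indexingMode": "consistent", "includedPaths": [{"path": "/*"}], "excludedPaths": []}
updated = exclude_vector_paths(base, ["/contentvector"])
print(updated["excludedPaths"])  # [{'path': '/contentvector/*'}]
```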

Step 3: Data Ingestion

- Generate Vector Embeddings: For every document, convert the text content (and potentially other data like images) into numerical vector embeddings using an embedding model (e.g., from Azure OpenAI Service). This topic is covered above.

- Populate Documents: Insert documents into your container. Each document should have:

- The text content in the fields specified in your full-text policy (e.g., content, description).

- The corresponding vector embedding in the field specified in your vector policy (e.g., /contentvector).

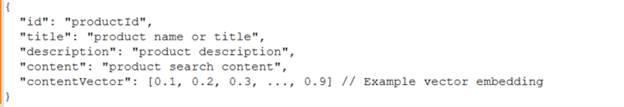

- Example document
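Before bulk-loading, a quick structural check catches documents whose text fields are missing or whose vector has the wrong length. The sketch below uses a hypothetical document with a toy 3-dimension vector (real `text-embedding-ada-002` vectors have 1536 values) and only handles top-level paths such as `/content`.

```python
def validate_document(doc, vector_policy, text_paths):
    """Return a list of problems; an empty list means the document matches the policies.

    Only top-level paths such as /content are handled in this sketch.
    """
    problems = []
    for path in text_paths:
        if not isinstance(doc.get(path.lstrip("/")), str):
            problems.append(f"missing text field {path}")
    for entry in vector_policy["vectorEmbeddings"]:
        vector = doc.get(entry["path"].lstrip("/"))
        if not isinstance(vector, list) or len(vector) != entry["dimensions"]:
            problems.append(f"{entry['path']} must hold {entry['dimensions']} numbers")
    return problems


# Toy policy and document; real ada-002 embeddings have 1536 dimensions.
toy_policy = {"vectorEmbeddings": [
    {"path": "/contentvector", "dataType": "float32", "distanceFunction": "cosine", "dimensions": 3}
]}
doc = {
    "id": "1",
    "content": "Adjustable LED desk lamp with a USB charging port",
    "description": "Desk lamp",
    "contentvector": [0.12, -0.03, 0.88],
}
print(validate_document(doc, toy_policy, ["/content", "/description"]))  # []
```

Documents that pass the check can then be written with, for example, `container.upsert_item(doc)` from the `azure-cosmos` package.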

Step 4: Search Queries

Hybrid search queries in Azure Cosmos DB for NoSQL combine the power of vector similarity search and full-text search within a single query using the Reciprocal Rank Fusion (RRF) function. This allows you to find documents that are both semantically similar and contain specific keywords.

```sql
SELECT TOP 10 * FROM c ORDER BY RANK RRF(VectorDistance(c.contentvector, @queryVector), FullTextScore(c.content, @searchKeywords))
```

VectorDistance(c.contentvector, @queryVector):

- VectorDistance(): This is a system function that calculates the similarity score between two vectors.

- @queryVector: This is a parameter representing the vector embedding of your search query. You would generate this vector embedding using the same embedding model used to create document vector embeddings.

- Return Value: Returns a similarity score based on the distance function defined in your vector policy (e.g., cosine, dot product, Euclidean).
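To make the return value concrete, the plain-Python functions below compute the three distance metrics for two small vectors. This illustrates the math only, not Cosmos DB's implementation: for cosine and dot product a larger value means more similar, while for Euclidean distance a smaller value does.

```python
import math


def dot_product(a, b):
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    # Dot product divided by the product of the vector lengths; ranges from -1 to 1.
    return dot_product(a, b) / (math.sqrt(dot_product(a, a)) * math.sqrt(dot_product(b, b)))


def euclidean_distance(a, b):
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


query_vector = [1.0, 0.0]
doc_vector = [3.0, 4.0]
print(cosine_similarity(query_vector, doc_vector))   # 0.6
print(dot_product(query_vector, doc_vector))         # 3.0
print(euclidean_distance(query_vector, doc_vector))  # sqrt(20), about 4.472
```

The dimension check mirrors the requirement above that the query vector come from the same embedding model as the document vectors.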

FullTextScore(c.content, @searchKeywords):

- FullTextScore(): This is a system function that calculates a BM25 score, which evaluates the relevance of a document to a given set of search terms. This function relies on a full-text index on the specified path.

- @searchKeywords: This is a parameter representing the keywords or phrases you want to search for. You can provide multiple keywords separated by commas.

- Return Value: Returns a BM25 score, indicating the relevance of the document to the search terms. Higher scores mean greater relevance.
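For intuition about what a BM25 score rewards, here is the textbook Okapi BM25 formula in Python with the common defaults k1 = 1.2 and b = 0.75. Cosmos DB's tokenizer, stemming, and parameter values are not described here, so treat this as an illustration of the scoring idea, not a reproduction of `FullTextScore`.

```python
import math
import re


def tokenize(text):
    # Lowercased word tokens; a real full-text index also applies stemming and stop-word rules.
    return re.findall(r"\w+", text.lower())


def bm25_scores(docs, query, k1=1.2, b=0.75):
    """Return one Okapi BM25 score per document for the given query."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n_docs
    terms = tokenize(query)
    # Document frequency: how many documents contain each query term.
    df = {term: sum(1 for tokens in tokenized if term in tokens) for term in set(terms)}
    scores = []
    for tokens in tokenized:
        score = 0.0
        for term in terms:
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            tf = tokens.count(term)
            score += idf * tf * (k1 + 1) / (tf + k1 * (1 - b + b * len(tokens) / avgdl))
        scores.append(score)
    return scores


docs = ["LED desk lamp", "floor lamp with lamp shade", "office chair"]
print(bm25_scores(docs, "lamp"))
```

The second document mentions "lamp" twice and scores highest, but not twice as high: k1 caps the benefit of repeated terms, and b penalizes it slightly for being longer than average.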

ORDER BY RANK RRF(…):

- RRF(…) (Reciprocal Rank Fusion): This is a system function that combines the ranked results from multiple scoring functions (like VectorDistance and FullTextScore) into a single, unified ranking. RRF ensures that documents that rank highly in either the vector search or the full-text search are prioritized in the final results.

Weighted hybrid search query:

```sql
SELECT TOP 10 * FROM c ORDER BY RANK RRF(VectorDistance(c.contentvector, @queryVector), FullTextScore(c.content, @searchKeywords), [2, 1])
```

- Optional Weights: You can optionally provide an array of weights as the last argument to RRF to control the relative importance of each component score. For example, to make the vector search count twice as much as the full-text search, use RRF(VectorDistance(c.contentvector, @queryVector), FullTextScore(c.content, @searchKeywords), [2, 1]).
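RRF itself is simple enough to reproduce. Each ranked list contributes weight / (k + rank) for every document it contains, and documents are re-sorted by the summed score. The sketch uses k = 60, the constant from the original RRF paper; which constant Cosmos DB uses internally, and whether its weights act exactly as multipliers on each term, are assumptions here.

```python
def rrf_fuse(rankings, weights=None, k=60):
    """Fuse ranked lists of document ids with (weighted) Reciprocal Rank Fusion.

    rankings: list of lists, each ordered best-first (rank 1 = first element).
    weights:  optional per-list multipliers; defaults to 1 for every list.
    """
    if weights is None:
        weights = [1.0] * len(rankings)
    if len(weights) != len(rankings):
        raise ValueError("need exactly one weight per ranking")
    scores = {}
    for ranking, weight in zip(rankings, weights):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + weight / (k + rank)
    # Highest fused score first; ties broken by id for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_ranking = ["a", "b", "c"]    # e.g. ordered by VectorDistance
fulltext_ranking = ["c", "b", "a"]  # e.g. ordered by FullTextScore
print(rrf_fuse([vector_ranking, fulltext_ranking]))                  # ['a', 'c', 'b']
print(rrf_fuse([vector_ranking, fulltext_ranking], weights=[2, 1]))  # ['a', 'b', 'c']
```

With equal weights, "a" and "c" tie because each is first in one list and last in the other. Doubling the vector weight breaks the tie in favor of the vector ranking's order.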

Multi-field hybrid search query:

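```sql
SELECT TOP 10 * FROM c ORDER BY RANK RRF(VectorDistance(c.contentvector, @queryVector), VectorDistance(c.imagevector, @queryVector), FullTextScore(c.content, @searchKeywords), FullTextScore(c.description, @searchKeywords), [3, 2, 1, 1])
```

This assumes /imagevector is declared in the vector embedding policy alongside /contentvector. The weights array needs one entry per scoring function, four in this case.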
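Hand-assembling multi-field queries makes it easy to drop a parenthesis or miscount the weights. The hypothetical Python helper below generates the query string and checks that the weights array has one entry per scoring component; the parameter names `@queryVector` and `@searchKeywords` match the examples above.

```python
def build_hybrid_query(vector_fields, text_fields, weights=None, top=10):
    """Build an ORDER BY RANK RRF(...) query for Cosmos DB for NoSQL.

    vector_fields / text_fields are property names on the item alias c.
    """
    components = [f"VectorDistance(c.{f}, @queryVector)" for f in vector_fields]
    components += [f"FullTextScore(c.{f}, @searchKeywords)" for f in text_fields]
    if not components:
        raise ValueError("at least one scoring component is required")
    args = ", ".join(components)
    if weights is not None:
        if len(weights) != len(components):
            raise ValueError(f"expected {len(components)} weights, got {len(weights)}")
        args += ", [" + ", ".join(str(w) for w in weights) + "]"
    return f"SELECT TOP {top} * FROM c ORDER BY RANK RRF({args})"


print(build_hybrid_query(["contentvector", "imagevector"], ["content", "description"], [3, 2, 1, 1]))
```

Field names are interpolated into the SQL text, so they should come from your own code and never from user input; user-supplied values belong in query parameters.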

Code Example (.NET Core C#)

- Add the Cosmos DB and Azure OpenAI SDKs (the Microsoft.Azure.Cosmos and Azure.AI.OpenAI NuGet packages)

- Get the Cosmos DB endpoint and key and create a Cosmos DB client

- Get the Azure OpenAI endpoint and key and create an Azure OpenAI client

- Generate an embedding for the user query

- Run a hybrid search query that combines vector and keyword search

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;
using Azure;                   // AzureKeyCredential
using Azure.AI.OpenAI;         // AzureOpenAIClient (Azure.AI.OpenAI 2.x)
using Microsoft.Azure.Cosmos;
using Newtonsoft.Json;         // JsonProperty; Newtonsoft is the Cosmos SDK's default serializer
using OpenAI.Embeddings;       // EmbeddingClient, OpenAIEmbedding

namespace CosmosHybridSearch
{
    public class Product
    {
        [JsonProperty("id")]
        public string Id { get; set; }

        [JsonProperty("name")]
        public string Name { get; set; }
    }

    public class Program
    {
        // Read secrets from environment variables; the fallbacks are placeholders only.
        private static readonly string CosmosEndpoint =
            Environment.GetEnvironmentVariable("COSMOS_ENDPOINT") ?? "YOUR_COSMOS_DB_ENDPOINT";
        private static readonly string CosmosKey =
            Environment.GetEnvironmentVariable("COSMOS_KEY") ?? "YOUR_COSMOS_DB_PRIMARY_KEY";
        private const string DatabaseId = "YourDatabaseId";
        private const string ContainerId = "YourContainerId"; // container with the vector and full-text policies

        private static readonly string OpenAIEndpoint =
            Environment.GetEnvironmentVariable("AZURE_OPENAI_ENDPOINT") ?? "https://YOUR_RESOURCE_NAME.openai.azure.com/";
        private static readonly string OpenAIKey =
            Environment.GetEnvironmentVariable("AZURE_OPENAI_API_KEY") ?? "YOUR_API_KEY";
        private const string EmbeddingDeployment = "text-embedding-ada-002"; // replace with your deployment name

        public static async Task Main(string[] args)
        {
            using CosmosClient cosmosClient = new(CosmosEndpoint, CosmosKey);
            Container container = cosmosClient.GetContainer(DatabaseId, ContainerId);

            // Azure OpenAI client plus an embedding client bound to the deployment.
            AzureOpenAIClient openAIClient = new(new Uri(OpenAIEndpoint), new AzureKeyCredential(OpenAIKey));
            EmbeddingClient embeddingClient = openAIClient.GetEmbeddingClient(EmbeddingDeployment);

            // Embed the search text with the same model that produced the stored document vectors.
            string searchTerm = "lamp";
            OpenAIEmbedding embedding = await embeddingClient.GenerateEmbeddingAsync(searchTerm);
            float[] queryVector = embedding.ToFloats().ToArray();

            // Cosmos DB for NoSQL hybrid query: RRF fuses the vector ranking and the BM25 full-text ranking.
            QueryDefinition queryDefinition = new QueryDefinition(
                    "SELECT TOP 10 c.id, c.name " +
                    "FROM c " +
                    "ORDER BY RANK RRF(VectorDistance(c.contentvector, @queryVector), FullTextScore(c.content, @searchTerm))")
                .WithParameter("@queryVector", queryVector)
                .WithParameter("@searchTerm", searchTerm);

            List<Product> products = new();
            using FeedIterator<Product> feedIterator = container.GetItemQueryIterator<Product>(queryDefinition);
            while (feedIterator.HasMoreResults)
            {
                FeedResponse<Product> response = await feedIterator.ReadNextAsync();
                products.AddRange(response);
            }

            // Process your search results.
            foreach (Product product in products)
            {
                Console.WriteLine($"Product Id: {product.Id}, Name: {product.Name}");
            }
        }
    }
}
```


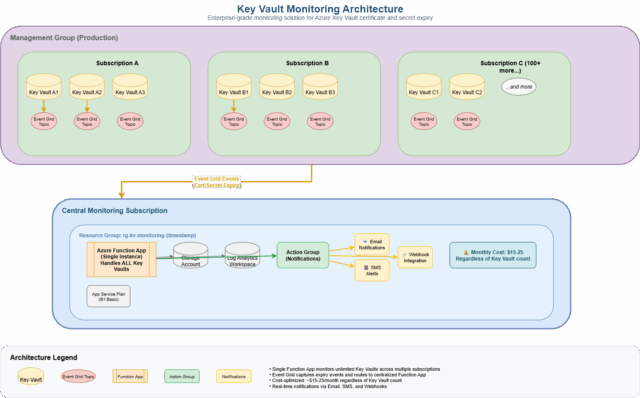

How to monitor hundreds of Key Vaults across multiple subscriptions for just $15-25/month

The Challenge: Key Vault Sprawl in Enterprise Azure

If you’re managing Azure at enterprise scale, you’ve likely encountered this scenario: Key Vaults scattered across dozens of subscriptions, hundreds of certificates and secrets with different expiry dates, and the constant fear of unexpected outages due to expired certificates. Manual monitoring simply doesn’t scale when you’re dealing with:

- Multiple Azure subscriptions (often 10-50+ in large organizations)

- Hundreds of Key Vaults across different teams and environments

- Thousands of certificates with varying renewal cycles

- Critical secrets that applications depend on

- Different time zones and rotation schedules

The traditional approach of spreadsheets, manual checks, or basic Azure Monitor alerts breaks down quickly. You need something that scales automatically, costs practically nothing, and provides real-time visibility across your entire Azure estate.

The Solution: Event-Driven Monitoring Architecture

Single Function App, Unlimited Key Vaults

Instead of deploying monitoring resources per Key Vault (expensive and complex), we use a centralized architecture:

Management Group (100+ Key Vaults) → Event Grid System Topics → Single Function App → Action Group → Notifications

This approach provides:

- Unlimited scalability: Monitor 1 or 1000+ Key Vaults with the same infrastructure

- Cross-subscription coverage: Works across your entire Azure estate

- Real-time alerts: Sub-5-minute notification delivery

- Cost optimization: $15-25/month total (not per Key Vault!)

How It Works: The Technical Deep Dive

1. Event Grid System Topics (The Sensors)

Azure Key Vault automatically publishes events when certificates and secrets are about to expire or have expired. We create an Event Grid system topic for each Key Vault to capture these events:

Event Types Monitored:

- Microsoft.KeyVault.CertificateNearExpiry

- Microsoft.KeyVault.CertificateExpired

- Microsoft.KeyVault.SecretNearExpiry

- Microsoft.KeyVault.SecretExpired

The beauty? These events are generated automatically by Azure – no polling, no manual checking, just real-time notifications when things are about to expire.

2. Centralized Processing (The Brain)

A single Azure Function App processes ALL events from across your organization:

```
// Simplified event processing flow
eventGridEvent → parseEvent() → extractMetadata() → formatAlert() → sendToActionGroup()
```

Example Alert Generated:

```
{
  severity: "Sev1",
  alertTitle: "Certificate Expired in Key Vault",
  description: "Certificate 'prod-ssl-cert' has expired in Key Vault 'prod-keyvault'",
  keyVaultName: "prod-keyvault",
  objectType: "Certificate",
  expiryDate: "2024-01-15T00:00:00.000Z"
}
```
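The parse, extract, and format steps can be sketched in Python to show how an incoming event maps to the alert above. This is not the article's Function code, which isn't shown. It assumes the Event Grid schema for Key Vault events, where `data` carries `VaultName`, `ObjectType`, `ObjectName`, and an `EXP` Unix timestamp; the Sev1/Sev2 mapping and the `format_alert` name are hypothetical, and delivery to the Action Group is left out.

```python
from datetime import datetime, timezone

# Hypothetical severity mapping: expired objects are Sev1, near-expiry objects Sev2.
SEVERITY = {
    "Microsoft.KeyVault.CertificateExpired": "Sev1",
    "Microsoft.KeyVault.SecretExpired": "Sev1",
    "Microsoft.KeyVault.CertificateNearExpiry": "Sev2",
    "Microsoft.KeyVault.SecretNearExpiry": "Sev2",
}


def format_alert(event):
    """Turn one Event Grid Key Vault event (as a dict) into an alert payload, or None if ignored."""
    event_type = event["eventType"]
    if event_type not in SEVERITY:
        return None  # not one of the monitored event types
    data = event["data"]
    object_type = data["ObjectType"]
    expired = event_type.endswith("Expired")
    expiry = datetime.fromtimestamp(int(data["EXP"]), tz=timezone.utc)
    state = "has expired" if expired else "is nearing expiry"
    return {
        "severity": SEVERITY[event_type],
        "alertTitle": f"{object_type} {'Expired' if expired else 'Near Expiry'} in Key Vault",
        "description": f"{object_type} '{data['ObjectName']}' {state} in Key Vault '{data['VaultName']}'",
        "keyVaultName": data["VaultName"],
        "objectType": object_type,
        "expiryDate": expiry.isoformat().replace("+00:00", "Z"),
    }


sample_event = {
    "eventType": "Microsoft.KeyVault.CertificateExpired",
    "subject": "prod-ssl-cert",
    "data": {
        "VaultName": "prod-keyvault",
        "ObjectType": "Certificate",
        "ObjectName": "prod-ssl-cert",
        "EXP": 1705276800,  # 2024-01-15T00:00:00Z
    },
}
print(format_alert(sample_event)["description"])
# Certificate 'prod-ssl-cert' has expired in Key Vault 'prod-keyvault'
```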

3. Smart Notification Routing (The Messenger)

Azure Action Groups handle notification distribution with support for:

- Email notifications to multiple recipients

- SMS alerts for critical expiries

- Webhook integration with ITSM tools (ServiceNow, Jira, etc.)

- Voice calls for emergency situations

Implementation: Infrastructure as Code

The entire solution is deployed using Terraform, making it repeatable and version-controlled. Here’s the high-level infrastructure:

Resource Architecture

```hcl
# Single monitoring resource group
resource "azurerm_resource_group" "monitoring" {
  name     = "rg-kv-monitoring-${var.timestamp}"
  location = var.primary_location
}

# Function App (handles ALL Key Vaults)
resource "azurerm_linux_function_app" "kv_processor" {
  name            = "func-kv-monitoring-${var.timestamp}"
  service_plan_id = azurerm_service_plan.function_plan.id
  # ... configuration
}

# Event Grid System Topics (one per Key Vault)
resource "azurerm_eventgrid_system_topic" "key_vault" {
  for_each = { for kv in var.key_vaults : kv.name => kv }

  name                   = "evgt-${each.key}"
  resource_group_name    = each.value.resourceGroup
  location               = each.value.location # assumes each key_vaults entry records the vault's region
  source_arm_resource_id = "/subscriptions/${each.value.subscriptionId}/resourceGroups/${each.value.resourceGroup}/providers/Microsoft.KeyVault/vaults/${each.key}" # source_resource_id in azurerm 4.x
  topic_type             = "Microsoft.KeyVault.vaults"
}

# Event Subscriptions (route events from each system topic to the Function App)
# A matching subscription for the Secret* event types follows the same pattern.
resource "azurerm_eventgrid_system_topic_event_subscription" "certificate_expiry" {
  for_each = azurerm_eventgrid_system_topic.key_vault

  name                = "evgs-cert-${each.key}"
  system_topic        = each.value.name
  resource_group_name = each.value.resource_group_name

  azure_function_endpoint {
    function_id = "${azurerm_linux_function_app.kv_processor.id}/functions/EventGridTrigger"
  }

  included_event_types = [
    "Microsoft.KeyVault.CertificateNearExpiry",
    "Microsoft.KeyVault.CertificateExpired"
  ]
}
```

CI/CD Pipeline Integration

The solution includes an Azure DevOps pipeline that:

- Discovers Key Vaults across your management group automatically

- Generates Terraform variables with all discovered Key Vaults

- Deploys infrastructure using infrastructure as code

- Validates deployment to ensure everything works

```yaml
# Simplified pipeline flow
stages:
  - stage: DiscoverKeyVaults
    # Scan management group for all Key Vaults
  - stage: DeployMonitoring
    # Deploy Function App and Event Grid subscriptions
  - stage: ValidateDeployment
    # Ensure monitoring is working correctly
```
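The "generate Terraform variables" step can be as small as reshaping discovery results into a `key_vaults` list. The sketch assumes rows shaped like the output of an Azure Resource Graph query such as `resources | where type =~ 'microsoft.keyvault/vaults' | project name, subscriptionId, resourceGroup, location` (run, for example, with `az graph query --management-groups <id>`). The field names match `kv.name`, `each.value.subscriptionId`, and `each.value.resourceGroup` in the Terraform above, plus `location`, which an Event Grid system topic requires. `to_tfvars` is a hypothetical helper.

```python
import json


def to_tfvars(rows):
    """Convert discovered Key Vault rows into a Terraform key_vaults variable.

    Key Vault names are globally unique, so rows are keyed by name; a vault returned
    twice collapses to one entry. Output is sorted by name for stable diffs.
    """
    by_name = {}
    for row in rows:
        by_name[row["name"]] = {
            "name": row["name"],
            "subscriptionId": row["subscriptionId"],
            "resourceGroup": row["resourceGroup"],
            "location": row["location"],
        }
    return {"key_vaults": [by_name[name] for name in sorted(by_name)]}


rows = [
    {"name": "prod-keyvault", "subscriptionId": "sub-a", "resourceGroup": "rg-prod", "location": "eastus"},
    {"name": "dev-keyvault", "subscriptionId": "sub-b", "resourceGroup": "rg-dev", "location": "westeurope"},
]
print(json.dumps(to_tfvars(rows), indent=2))
```

Writing this output to a file such as `key_vaults.auto.tfvars.json` lets Terraform load it automatically in the deploy stage, and the sorted order keeps plans stable between pipeline runs.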

Cost Analysis: Why This Approach Wins

Traditional Approach (Per-Key Vault Monitoring)

100 Key Vaults × $20/month per KV = $2,000/month

Annual cost: $24,000

This Approach (Centralized Monitoring)

Base infrastructure: $15-25/month

Event Grid events: $2-5/month

Total: $17-30/month

Annual cost: $204-360

Savings: 98%+ reduction in monitoring costs
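The savings claim follows from the figures above. Reproducing the arithmetic with only those estimates gives a range of about 99.2% (low estimate) to 98.5% (high estimate), consistent with "98%+".

```python
def annual(monthly):
    return monthly * 12


# Figures taken from the estimate above.
traditional_monthly = 100 * 20   # 100 Key Vaults x $20/month each
centralized_monthly = (17, 30)   # low and high monthly totals

traditional_annual = annual(traditional_monthly)
centralized_annual = tuple(annual(m) for m in centralized_monthly)
savings = tuple(1 - cost / traditional_annual for cost in centralized_annual)

print(traditional_annual)   # 24000
print(centralized_annual)   # (204, 360)
print([f"{s:.1%}" for s in savings])
```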

Detailed Cost Breakdown

| Component | Monthly Cost | Notes |
|---|---|---|
| Function App (Basic B1) | $13.14 | Handles unlimited Key Vaults |
| Storage Account | $1-3 | Function runtime storage |
| Log Analytics | $2-15 | Centralized logging |